KAIST


Research News

College of Engineering News

-

Engineered Microbial Production of Grape Flavoring

(Image 1: Engineered bacteria that produce grape flavoring.) Researchers report a microbial method for producing grape flavoring. Methyl anthranilate (MANT) is a common grape flavoring and odorant compound that is currently produced through a petroleum-based process using large volumes of toxic acid catalysts. Professor Sang-Yup Lee’s team at the Department of Chemical and Biomolecular Engineering demonstrated production of MANT, a naturally occurring compound, by engineered bacteria. The authors engineered strains of Escherichia coli and Corynebacterium glutamicum to produce MANT through an engineered metabolic pathway derived from plants, and tuned this pathway by optimizing the levels of AAMT1, the key enzyme in the process. To maximize MANT production, the authors tested six strategies, including increasing the supply of a precursor compound and enhancing the availability of a co-substrate. The most productive strategy proved to be a two-phase extractive culture, in which MANT was extracted into a solvent. This strategy produced 4.47 to 5.74 grams of MANT per liter, a significant amount considering that engineered microbes produce most natural products at the scale of milligrams or micrograms per liter. According to the authors, the results suggest that MANT and related molecules currently made through industrial processes could be produced at scale by engineered microbes, allowing them to be marketed as natural rather than artificial. This study, published in the Proceedings of the National Academy of Sciences of the USA on May 13, was supported by the Technology Development Program to Solve Climate Changes on Systems Metabolic Engineering for Biorefineries from the Ministry of Science and ICT. (Image 2. Overview of the strategies applied for the microbial production of grape flavoring.)

-

KAIST Identifies the Cause of Sepsis-induced Lung ..

(Professor Pilhan Kim from the Graduate School of Medical Science and Engineering) A KAIST research team succeeded in visualizing pulmonary microcirculation and circulating cells in vivo with a custom-built 3D intravital lung microscopic imaging system. They found that neutrophils, a type of leukocyte, aggregate inside the capillaries during sepsis-induced acute lung injury (ALI), disturbing blood microcirculation and creating dead space. According to the researchers, this phenomenon is responsible for the tissue hypoxia that causes lung damage in the sepsis model, and reducing the neutrophil aggregation improves both microcirculation and hypoxia. The lungs exchange oxygen and carbon dioxide during breathing, an essential function for sustaining life. This gas exchange occurs in the alveoli, each surrounded by many capillaries through which red blood cells circulate. Researchers have long tried to observe microcirculation in the alveoli, but capturing high-resolution images of capillaries and red blood cells inside lungs that are in constant breathing motion has been technically challenging. Professor Pilhan Kim from the Graduate School of Medical Science and Engineering and his team developed an ultra-fast laser scanning confocal microscope and an imaging chamber that minimizes the movement of the lung while preserving its respiratory state. They used this technology to capture red blood cell circulation inside the capillaries of animal models with sepsis. In doing so, they found that hypoxia in the sepsis model was induced by an increase in dead space inside the lungs, that is, regions where red blood cells do not circulate. This dead space results from neutrophils aggregating and becoming trapped inside the capillaries and arterioles. The trapped neutrophils were also shown to damage lung tissue in the sepsis model by inhibiting microcirculation and releasing reactive oxygen species.
Further studies showed that the neutrophils aggregated inside pulmonary vessels express higher levels of the Mac-1 receptor (CD11b/CD18), a receptor involved in intercellular adhesion, than normally circulating neutrophils. Additionally, the team confirmed that Mac-1 inhibitors can restore impaired microcirculation and ameliorate hypoxia while reducing pulmonary edema in the sepsis model. Their high-resolution 3D intravital microscope technology allows real-time imaging of living cells inside the lungs, and this work is expected to support research on various lung diseases, including sepsis. The research team’s pulmonary circulation imaging and precise analytical techniques will serve as a base technology for developing new diagnostic technologies and evaluating new therapeutic agents for various diseases related to microcirculation. Professor Kim said, “In the ALI model, the inhibition of pulmonary microcirculation occurs due to neutrophils. By controlling this effect and improving microcirculation, it is possible to eliminate hypoxia and pulmonary edema – a new, effective strategy for treating patients with sepsis.” Their 3D intravital microscope technology was commercialized through IVIM Technology, Inc., a faculty startup at KAIST, which released all-in-one intravital microscope models called ‘IVM-CM’ and ‘IVM-C’. This next-generation imaging equipment for basic biomedical research on the complex pathophysiology of various human diseases is expected to play a crucial role in the future global bio-health market. This research, led by Dr. Inwon Park from the Department of Emergency Medicine at Seoul National University Bundang Hospital, formerly of the Graduate School of Medical Science and Engineering at KAIST, was published in the European Respiratory Journal (2019, 53:1800736) on March 28, 2019. Figure 1. Custom-built high-speed real-time intravital microscope platform Figure 2. Illustrative schematic and photo of a 3D intravital lung microscopic imaging system Figure 3. Aggregation of neutrophils and consequent flow disturbance in pulmonary arteriole in sepsis-induced lung injury

-

Five Biomarkers for Overcoming Colorectal Cancer D..

(Professor Kwang-Hyun Cho’s team) KAIST researchers have identified five biomarkers that could help overcome resistance to targeted cancer therapeutics. This new treatment strategy could bring precision medicine one step closer for patients who have shown drug resistance. Colorectal cancer is one of the most common types of cancer worldwide. The number of patients has surpassed 1 million, and the five-year survival rate drops to about 20 percent once the cancer has metastasized. In Korea, colorectal cancer has increased most sharply over the last 10 years, driven by increasingly Westernized dietary patterns and obesity. The number of colorectal cancer patients and the mortality rate are expected to rise sharply as the country’s population ages rapidly. Recently, anticancer agents that target only specific molecules in colon cancer cells have been developed. Unlike conventional anticancer medications, these act selectively on specific targets, so they can reduce some of the side effects of anticancer therapy while enhancing drug efficacy. Cetuximab is the best-known FDA-approved drug of this kind. The presence or absence of a ‘KRAS’ gene mutation serves as a biomarker to predict response to Cetuximab, and the drug is prescribed only to patients who do not carry the KRAS mutation. However, even in patients without the KRAS mutation, the response rate to Cetuximab is only about 50 percent, and resistance can also develop after targeted therapy. Compared with conventional chemotherapy alone, Cetuximab extends life expectancy by only about five months on average.
In research featured as the cover paper of the April 7 edition of the FEBS Journal, the KAIST research team led by Professor Kwang-Hyun Cho at the Department of Bio and Brain Engineering presented five additional biomarkers that could increase Cetuximab responsiveness, using a systems biology approach that combines genomic data analysis, mathematical modeling, and cell experiments. Experimental inhibition of the newly discovered biomarkers DUSP4, ETV5, GNB5, NT5E, and PHLDA1 in colorectal cancer cells was shown to overcome Cetuximab resistance in cells with normal (non-mutated) KRAS. The team also confirmed that suppressing GNB5, one of the new biomarkers, overcame resistance to Cetuximab regardless of KRAS mutation status. Professor Cho said, “There has not been an example of colorectal cancer treatment involving regulation of the GNB5 gene.” He continued, “Identifying the principle of drug resistance in cancer cells through systems biology and discovering new biomarkers that could be a new molecular target to overcome drug resistance suggest real potential to actualize precision medicine.” This study was supported by the National Research Foundation of Korea (NRF) and funded by the Ministry of Science and ICT (2017R1A2A1A17069642 and 2015M3A9A7067220). Image 1. The cover of FEBS Journal for April 2019

-

Nanomaterials Mimicking Natural Enzymes with Super..

(Professor Jinwoo Lee from the Department of Chemical and Biomolecular Engineering) A KAIST research team doped nitrogen and boron into graphene to selectively increase its peroxidase-like activity and succeeded in synthesizing a low-cost peroxidase-mimicking nanozyme with superior catalytic activity. These nanomaterials could be applied to the early diagnosis of Alzheimer’s disease. Enzymes are the main catalysts in our body and are widely used in bioassays. In particular, peroxidase, which in the presence of hydrogen peroxide oxidizes colorless colorimetric substrates into colored products, is the enzyme most commonly used in colorimetric bioassays. However, natural enzymes, being proteins, are unstable against changes in temperature and pH, hard to synthesize, and costly. Nanozymes, by contrast, are not made of proteins, so their robustness and high productivity can overcome these disadvantages. Most nanozymes, however, lack selectivity; for example, peroxidase-mimicking nanozymes also show oxidase-like activity, oxidizing colorimetric substrates even in the absence of hydrogen peroxide, which prevents them from precisely detecting target materials such as hydrogen peroxide. Professor Jinwoo Lee from the Department of Chemical and Biomolecular Engineering and his team synthesized a peroxidase-mimicking nanozyme with superior catalytic activity and selectivity toward hydrogen peroxide. Co-doping nitrogen and boron into graphene, which on its own has negligible peroxidase-like activity, selectively increased its peroxidase-like activity without introducing oxidase-like activity. The resulting material accurately mimics natural peroxidase, making it a strong candidate to replace the enzyme. The experimental results were also verified with computational chemistry.
The nitrogen and boron co-doped graphene was also applied to the colorimetric detection of acetylcholine, an important neurotransmitter, and detected it even better than natural peroxidase. Professor Lee said, “We began to study nanozymes due to their potential for replacing existing enzymes. Through this study, we have secured core technologies to synthesize nanozymes that have high enzyme activity along with selectivity. We believe that they can be applied to effectively detect acetylcholine for quickly diagnosing Alzheimer’s disease.” This research, led by Min Su Kim, PhD, was published in ACS Nano (10.1021/acsnano.8b09519) on March 25, 2019. Figure 1. Comparison of the catalytic activities of various nanozymes and horseradish peroxidase (HRP) toward TMB and H₂O₂ Figure 2. Schematic illustration of NB-rGO reactions in bioassays

-

KAIST Unveils the Hidden Control Architecture of B..

(Professor Kwang-Hyun Cho and his team) A KAIST research team identified the intrinsic control architecture of brain networks. These control properties could provide a fundamental basis for the exogenous control of brain networks and therefore have broad implications for cognitive and clinical neuroscience. Although efficiency and robustness are often regarded as a trade-off, the human brain usually exhibits both when it performs complex cognitive functions. Such optimality must be rooted in a specific, coordinated control of interconnected brain regions, but the intrinsic control architecture of brain networks has remained poorly understood. Professor Kwang-Hyun Cho from the Department of Bio and Brain Engineering and his team investigated this intrinsic control architecture. They employed an interdisciplinary approach that spans connectomics, neuroscience, control engineering, network science, and systems biology to examine the structural brain networks of various species, and compared them with the control architectures of other biological networks as well as man-made ones, such as social, infrastructural, and technological networks. In particular, the team reconstructed the structural brain networks of 100 healthy human adults by performing brain parcellation and tractography with structural and diffusion imaging data obtained from the Human Connectome Project database of the US National Institutes of Health. The team developed a framework for analyzing the control architecture of brain networks based on the minimum dominating set (MDSet), the smallest subset of nodes (MD-nodes) such that every remaining node is directly connected to at least one MD-node and can therefore be controlled through a one-step direct interaction. MD-nodes play a crucial role in various complex networks, including biomolecular networks, but had not been investigated in brain networks.
By exploring and comparing the structural principles underlying the composition of the MDSets of various complex networks, the team delineated their distinct control architectures. Interestingly, the team found that the fraction of nodes belonging to the MDSet is remarkably small in brain networks compared with other complex networks. This finding implies that brain networks may have been optimized to minimize the cost of controlling the network. Furthermore, the team found that the MDSets of brain networks are not determined solely by node degree, but are strategically placed to form a particular control architecture. The team thereby revealed the hidden control architecture of brain networks: a distributed and overlapping control architecture that is distinct from those of other complex networks. This architecture confers robustness against targeted attacks (i.e., preferential attacks on high-degree nodes), which might be a fundamental basis of robust brain function against preferential damage to high-degree nodes (i.e., brain regions). Moreover, the team found that this control architecture also enables highly efficient switching from one network state, defined by a set of node activities, to another, a capability that is crucial for traversing diverse cognitive states. Professor Cho said, “This study is the first attempt to make a quantitative comparison between brain networks and other real-world complex networks. Understanding of intrinsic control architecture underlying brain networks may enable the development of optimal interventions for therapeutic purposes or cognitive enhancement.” This research, led by Byeongwook Lee, Uiryong Kang and Hongjun Chang, was published in iScience (10.1016/j.isci.2019.02.017) on March 29, 2019. Figure 1. Schematic of identification of control architecture of brain networks. Figure 2. Identified control architectures of brain networks and other real-world complex networks.
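To make the MDSet definition concrete, here is a minimal brute-force sketch, assuming an undirected graph stored as a Python dictionary of adjacency sets. The helper names (`build_adjacency`, `is_dominating`, `minimum_dominating_set`) are hypothetical and this is not the team’s analysis framework; exhaustive search grows exponentially with the number of nodes, so it only suits toy graphs, not reconstructed connectomes.

```python
from itertools import combinations


def build_adjacency(n_nodes, edges):
    """Undirected adjacency sets for nodes 0..n_nodes-1 (hypothetical helper)."""
    adj = {v: set() for v in range(n_nodes)}
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj


def is_dominating(adj, subset):
    """True if every node is in `subset` or adjacent to a node in `subset`."""
    covered = set(subset)
    for v in subset:
        covered |= adj[v]
    return len(covered) == len(adj)


def minimum_dominating_set(adj):
    """Exhaustive search for a smallest dominating set (MDSet); toy graphs only."""
    nodes = sorted(adj)
    for k in range(1, len(nodes) + 1):
        for subset in combinations(nodes, k):
            if is_dominating(adj, subset):
                return set(subset)
    return set()  # only reached for a graph with no nodes


# A 6-node path 0-1-2-3-4-5: two MD-nodes are enough to dominate it.
path = build_adjacency(6, [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5)])
mdset = minimum_dominating_set(path)
print(sorted(mdset), len(mdset) / len(path))
```

The ratio `len(mdset) / len(path)` is the kind of MDSet fraction that the study compared across networks, although the study’s exact normalization may differ.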

-

On-chip Drug Screening for Identifying Antibiotic ..

(from left: Seunggyu Kim and Professor Jessie Sungyun Jeon) A KAIST research team developed a microfluidic drug screening chip that identifies synergistic interactions between two antibiotics in eight hours. This chip could serve as a cell-based drug screening platform for exploring critical pharmacological patterns of antibiotic interactions, with potential applications in screening other agents and in guiding clinical therapies. Antibiotic susceptibility testing, which determines the types and doses of antibiotics that effectively inhibit bacterial growth, has become more critical in recent years with the emergence of antibiotic-resistant pathogenic bacterial strains. To overcome antibiotic-resistant bacteria, combination therapy using two or more antibiotics has been gaining considerable attention. However, this therapy is not always effective; occasionally, unfavorable antibiotic pairs suppress each other’s antimicrobial effects and worsen outcomes. Combination testing is therefore a crucial preliminary step for finding suitable antibiotic pairs and their concentration ranges against unknown pathogens, but conventional testing methods require laborious concentration dilution and sample preparation and take more than 24 hours to produce results. To reduce time and enhance the efficiency of combination testing, Professor Jessie Sungyun Jeon from the Department of Mechanical Engineering, in collaboration with Professor Hyun Jung Chung from the Department of Biological Sciences, developed a high-throughput drug screening chip that generates 121 pairwise concentrations of two antibiotics. The team used a microfluidic chip requiring a sample volume of only a few tens of microliters, on which the 121 pairwise concentrations formed automatically in only 35 minutes.
They loaded a mixture of bacterial samples and agarose into the microchannel and injected reagents with or without antibiotics into the surrounding microchannels. Diffusion of antibiotic molecules from the channels containing antibiotics to those without formed two orthogonal concentration gradients of the two antibiotics across the bacteria-trapping agarose gel. The team monitored the inhibition of bacterial growth by the orthogonal antibiotic gradients under a microscope over six hours, and identified distinct patterns for different antibiotic pairs, classifying their interactions as either synergy or antagonism. Professor Jeon said, “The feasibility of microfluidic-based drug screening chips is promising, and we expect our microfluidic chip to be commercialized and utilized in the near future.” This study, led by Seunggyu Kim, was published in Lab on a Chip (10.1039/c8lc01406j) on March 21, 2019. Figure 1. Back cover image of Lab on a Chip. Figure 2. Examples of testing results using the microfluidic chips developed in this research.
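The article does not say how synergy and antagonism were scored. As one illustration only, the sketch below assumes the 121 concentration pairs form an 11 × 11 grid of measured growth inhibition (row 0 holding zero drug A, column 0 holding zero drug B) and compares each combination with the Bliss independence reference model. Bliss independence is a common choice swapped in here and is not necessarily the team’s method; the function names and the 0.05 tolerance are hypothetical.

```python
import numpy as np


def bliss_excess(inhibition):
    """Observed minus Bliss-expected inhibition for every concentration pair.

    inhibition[i, j] is the fractional growth inhibition (0..1) at the i-th
    concentration of drug A and the j-th concentration of drug B. Row 0 is
    assumed to be zero drug A and column 0 zero drug B.
    """
    a_alone = inhibition[:, 0:1]  # column 0: drug A only (column vector)
    b_alone = inhibition[0:1, :]  # row 0: drug B only (row vector)
    expected = a_alone + b_alone - a_alone * b_alone  # Bliss independence
    return inhibition - expected


def classify_pair(inhibition, tol=0.05):
    """Label a drug pair by the mean Bliss excess over the combination wells."""
    mean_excess = bliss_excess(inhibition)[1:, 1:].mean()
    if mean_excess > tol:
        return "synergy"
    if mean_excess < -tol:
        return "antagonism"
    return "no clear interaction"


# Toy 11 x 11 grid (121 pairs) in which the two drugs act independently.
fa = np.linspace(0.0, 0.5, 11)[:, None]
fb = np.linspace(0.0, 0.5, 11)[None, :]
independent = fa + fb - fa * fb
print(classify_pair(independent))
```

Averaging over the whole grid keeps the sketch short; a real analysis would more likely map the excess well by well, since a pair can be synergistic in one concentration range and antagonistic in another.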

-

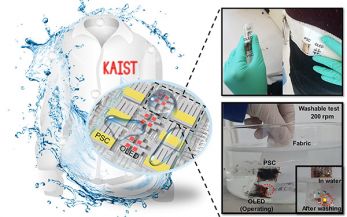

True-meaning Wearable Displays: Self-powered, Wash..

(Video: The washing process of the wearable display module) Clothes are usually made of textiles and have to be both wearable and washable for daily use; smart clothing, however, has struggled with its power sources and with moisture penetration, which causes the devices to malfunction. A KAIST research team has now overcome this problem by developing a textile-based wearable display module technology that is washable and does not require an external power source. To solve the power-source problem and enhance the practicality of wearable displays, Professor Kyung Cheol Choi from the School of Electrical Engineering and his team fabricated wearable display modules on real textiles, integrating polymer solar cells (PSCs) with organic light-emitting diodes (OLEDs). PSCs have been among the most promising candidates for next-generation power sources, especially for wearable and optoelectronic applications, because they can provide stable power without an external power source, while OLEDs can be driven with milliwatts. However, both are highly vulnerable to external moisture and oxygen, so an encapsulation barrier is essential for their reliability. Conventional encapsulation barriers are sufficient in normal environments but lose their properties in aqueous environments such as water. This has limited the commercialization of wearable displays, which must operate even on rainy days or after washing. To tackle this issue, the team used atomic layer deposition (ALD) and spin coating to create a washable encapsulation barrier that protects the device without losing its properties after washing. With this encapsulation technology, the team confirmed that textile-based wearable display modules including PSCs, OLEDs, and the proposed encapsulation barrier showed little change in characteristics even after 20 washing cycles of 10 minutes each.
Moreover, the encapsulated device operated stably at a curvature radius as small as 3 mm and showed high reliability. It also exhibited no deterioration in properties over 30 days, even after being subjected to both bending stress and washing. Because it uses textiles, which are less stressful than the traditional plastic substrates used in conventional wearable electronic devices, this technology could accelerate the commercialization of wearable electronic devices. Importantly, because it is self-powered, the device can save energy in daily use. Professor Choi said, “I could say that this research realized a truly washable wearable electronic module in the sense that it uses daily wearable textiles instead of the plastic used in conventional wearable electronic devices. Saving energy with PSCs, it can be self-powered, using nature-friendly solar energy, and washed. I believe that it has paved the way for a ‘true-meaning wearable display’ that can be formed on textile, beyond the attachable form of wearable technology.” This research, in collaboration with Professor Seok Ho Cho from Chonnam National University and led by Eun Gyo Jeong, was published in Energy and Environmental Science (10.1039/c8ee03271h) on January 18, 2019. Figure 1. Schematic and photo of a washable wearable display module Figure 2. Cover page of Energy and Environmental Science

-

Wafer-scale multilayer fabrication of silk fibroin..

A KAIST research team developed a novel fabrication method for the multilayer processing of silk-based microelectronics. The technique allows biodegradable silk fibroin films to be microfabricated together with polymer or metal structures patterned by photolithography. It could be a key technology for implementing silk fibroin-based biodegradable electronic devices or localized drug delivery through silk fibroin patterns. Silk fibroin is biocompatible, biodegradable, transparent, and flexible, which makes it an excellent candidate for implantable biomedical devices, and it has also been used for biodegradable films and functional microstructures in biomedical applications. However, conventional microfabrication processes use strong etching solutions and solvents that can alter the structure of silk fibroin. To prevent the silk fibroin from being damaged during processing, Professor Hyunjoo J. Lee from the School of Electrical Engineering and her team developed a novel process, named aluminum hard mask on silk fibroin (AMoS), which can micropattern multiple layers composed of both fibroin and inorganic materials, such as metals and dielectrics, with high-precision microscale alignment. The AMoS process can create silk fibroin patterns on devices, or pattern other materials on silk fibroin thin films, using photolithography, a core technology of current microfabrication. The team successfully cultured primary neurons on the processed silk fibroin micropatterns, confirming that silk fibroin shows excellent biocompatibility both before and after the fabrication process and could be applied to implantable biological devices. Using this technology, the team realized multilayer micropatterning of fibroin films on a silk fibroin substrate and fabricated a biodegradable microelectronic circuit consisting of resistors and silk fibroin dielectric capacitors across a large-area silicon wafer.
They also used this technology to place silk fibroin thin-film micropatterns close to a flexible polymer-based brain electrode, and confirmed that dye molecules loaded on the silk fibroin were successfully transferred from the micropatterns. Professor Lee said, “This technology facilitates wafer-scale, large-area processing of sensitive materials. We expect it to be applied to a wide range of biomedical devices in the future. Using the silk fibroin with micro-patterned brain electrodes can open up many new possibilities in research on brain circuits by mounting drugs that restrict or promote brain cell activities.” This research, in collaboration with Dr. Nakwon Choi from KIST and led by PhD candidate Geon Kook, was published in ACS Applied Materials & Interfaces (ACS AMI) (10.1021/acsami.8b13170) on January 16, 2019. Figure 1. The cover page of ACS AMI Figure 2. Fibroin microstructures and metal patterns on fibroin produced using the AMoS mask. Figure 3. Biocompatibility assessment of the AMoS process. Top: Schematic images of a) fibroin-coated silicon, b) fibroin-patterned silicon, and c) gold-patterned fibroin. Bottom: Representative confocal microscopy images of live (green) and dead (red) primary cortical neurons cultured on the substrates.

-

Novel Material Properties of Hybrid Perovskite Nan..

(from left: Juho Lee, Dr. Muhammad Ejaz Khan and Professor Yong-Hoon Kim) A KAIST research team proposed a novel nonlinear device whose key property derives from perovskite nanowires. They showed that inorganic-framework nanowires derived from hybrid perovskites can acquire semi-metallicity, and proposed negative differential resistance (NDR) devices with excellent NDR characteristics arising from a novel quantum-hybridization NDR mechanism, suggesting that perovskite nanowires could be used in next-generation electronic devices. Organic-inorganic hybrid halide perovskites have recently emerged as prominent candidates for photonic applications due to their excellent optoelectronic properties as well as their low cost and facile synthesis. Significant progress has already been made in devices including solar cells, light-emitting diodes, lasers, and photodetectors. However, electronic devices based on hybrid halide perovskites have received far less research attention than their photonic counterparts. Professor Yong-Hoon Kim from the School of Electrical Engineering and his team took a closer look at low-dimensional organic-inorganic halide perovskite materials, which have enhanced quantum confinement effects, focusing in particular on the recently synthesized trimethylsulfonium (TMS) lead triiodide, (CH₃)₃SPbI₃. Using supercomputer simulations, the team first showed that stripping the (CH₃)₃S (TMS) organic ligands from TMS PbI₃ perovskite nanowires results in semi-metallic PbI₃ columns, which contradicts the conventional assumption that the inorganic perovskite framework is semiconducting or insulating. Using the semi-metallic PbI₃ inorganic framework as the electrode, the team designed a tunneling junction device from perovskite nanowires and found that it exhibits excellent nonlinear NDR behavior.
NDR is key to realizing next-generation, ultra-low-power, multivalued nonlinear devices. Furthermore, the team found that this NDR originates from a novel mechanism involving quantum-mechanical hybridization between channel and electrode states. Professor Kim said, “This research demonstrates the potential of quantum mechanics-based computer simulations to lead developments in advanced nanomaterials and nanodevices. In particular, this research proposes a new direction in the development of quantum mechanical tunneling devices, the topic for which Dr. Leo Esaki shared the 1973 Nobel Prize in Physics.” This research, led by Dr. Muhammad Ejaz Khan and PhD candidate Juho Lee, was published online in Advanced Functional Materials (10.1002/adfm.201807620) on January 7, 2019. Figure. The draft version of the cover page of 'Advanced Functional Materials'
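Numerically, NDR means that the differential conductance dI/dV is negative over some voltage range. The sketch below, assuming a current-voltage curve sampled as two arrays, locates such ranges by finite differences and reports the peak-to-valley current ratio (PVCR), a common figure of merit for NDR devices that the article itself does not quote. The function names and the toy data are hypothetical and are not results from the paper.

```python
import numpy as np


def ndr_segments(voltage, current):
    """Index pairs (peak, valley) bounding each run of samples where dI/dV < 0."""
    v = np.asarray(voltage, dtype=float)
    i = np.asarray(current, dtype=float)
    slope = np.diff(i) / np.diff(v)  # finite-difference dI/dV between samples
    segments, start = [], None
    for k, s in enumerate(slope):
        if s < 0 and start is None:
            start = k  # current starts falling after sample k (the peak)
        elif s >= 0 and start is not None:
            segments.append((start, k))  # sample k is the valley
            start = None
    if start is not None:
        segments.append((start, len(slope)))  # still falling at the last sample
    return segments


def peak_to_valley_ratio(voltage, current):
    """PVCR of the first NDR region, or None if the curve shows no NDR."""
    segments = ndr_segments(voltage, current)
    if not segments:
        return None
    peak, valley = segments[0]
    return current[peak] / current[valley]


# Toy I-V samples (arbitrary current units) with one NDR region from 0.2 V to 0.3 V.
v = [0.0, 0.1, 0.2, 0.3, 0.4, 0.5]
i = [0.0, 2.0, 4.0, 1.0, 2.0, 3.0]
print(ndr_segments(v, i), peak_to_valley_ratio(v, i))
```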

-

Kimchi Toolkit by Costa Rican Summa Cum Laude Help..

(Maria Jose Reyes Castro with her kimchi toolkit application) Every graduate feels a special attachment to their school, but for Maria Jose Reyes Castro, who graduated summa cum laude from the Department of Industrial Design this year, KAIST will be remembered for more than just academics. She appreciates KAIST not only for giving her great professional opportunities, but also for helping her find the love of her life. During her master’s course, she developed an electronic kimchi toolkit that helps optimize kimchi’s flavor. The kit uses a mobile application and a smart sensor to determine the fermentation level of kimchi by measuring its pH, which is closely related to fermentation. A user sets a desired fermentation level or salinity in the mobile application, which then suggests the best date to serve the kimchi. Under the guidance of Professor Daniel Saakes, she conducted research on developing a kimchi toolkit for beginners (Qualified Kimchi: Improving the experience of inexperienced kimchi makers by developing a monitoring toolkit for kimchi). “I’ve seen many foreigners saying it’s quite difficult to make kimchi. So I chose to study kimchi to help people, especially first-time kimchi makers, make it more easily,” she said. She got recipes from YouTube, studied fermentation through academic journals, and consulted kimchi experts to gain a deeper understanding of the process. Building on her studies, she joined a startup specializing in smart farms last month, where she conducts research on biology and applies it to designs that can be used practically in daily life. Her tie with KAIST goes back to 2011, when she attended an international science camp in Germany. There she met Sunghan Ro (’19 PhD in Nanoscience and Technology), then a KAIST student and now her husband. He recommended that she enroll at KAIST because the school offers an outstanding education and research infrastructure along with support for foreign students.
At that time, Castro had just begun her first semester in electrical engineering at the University of Costa Rica, but she decided to apply to KAIST to seek a better opportunity in a new environment. One year later, in the fall semester of 2012, she made a fresh start at KAIST. Instead of her original major, electrical engineering, she chose to pursue her studies in the Department of Industrial Design, an interdisciplinary field where students study design while learning about business models and making prototypes. She said, “I felt encouraged by my professors and colleagues in my department to be creative and follow my passion. I never regret entering this major.” While pursuing her master’s program in the same department, Castro became interested in interaction design with food and in biological design through her advisor, Professor Saakes, who specializes in these areas. After years of following her passion for design, she now graduates with academic honors in her department. Closing her journey at KAIST is a bittersweet moment, but she said, “I want to thank KAIST for the opportunity to change my life for the better. I also thank my parents for being supportive and encouraging me. I really appreciate the professors from the Department of Industrial Design who guided and shaped who I am.” Figure 1. The concept of the kimchi toolkit Figure 2. The scenario of the kimchi toolkit
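The article does not describe how the toolkit computes its suggested serving date. As a rough illustration only, the sketch below assumes the app fits a straight line to daily pH readings by least squares and extrapolates to the day the pH reaches a user-chosen target. Real kimchi acidification is not linear, and the function name, readings, and target pH are all hypothetical.

```python
def predict_serving_day(days, ph_readings, target_ph):
    """Day on which a least-squares linear pH trend reaches `target_ph`.

    Needs readings from at least two different days. Returns None if the
    fitted trend is not falling (the kimchi is not acidifying).
    """
    n = len(days)
    mean_day = sum(days) / n
    mean_ph = sum(ph_readings) / n
    s_dd = sum((d - mean_day) ** 2 for d in days)
    s_dp = sum((d - mean_day) * (p - mean_ph) for d, p in zip(days, ph_readings))
    slope = s_dp / s_dd  # fitted pH change per day
    intercept = mean_ph - slope * mean_day
    if slope >= 0:
        return None
    return (target_ph - intercept) / slope


# Hypothetical readings falling by 0.5 pH units per day from pH 6.0.
print(predict_serving_day([0, 1, 2], [6.0, 5.5, 5.0], target_ph=4.5))
```

A linear fit keeps the example readable; a production app would more plausibly use a curve that levels off as fermentation slows.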

-

KAIST Develops Analog Memristive Synapses for Neur..

(Professor Sung-Yool Choi from the School of Electrical Engineering) A KAIST research team has developed a technology that shifts the operation mode of flexible memristors to synaptic analog switching by reducing the size of the filament formed inside the device. With this technology, memristors can extend their role to serve as memristive synapses for neuromorphic chips, which will support the development of soft neuromorphic intelligent systems. Brain-inspired neuromorphic chips have been gaining a great deal of attention because, compared with conventional semiconductor chips, they can reduce power consumption and integrate data processing. Memristors are considered the most suitable candidate for building a crossbar array, the most efficient architecture for realizing a hardware-based artificial neural network (ANN) inside a neuromorphic chip. A hardware-based ANN consists of neuron circuits and the synapse elements that connect them. In a neuromorphic system, the synaptic weight, which represents the connection strength between neurons, should be stored and updated as analog data at each synapse. However, most memristors have digital characteristics suited to nonvolatile memory. These characteristics limit the analog operation of memristors, making it difficult to use them as synaptic devices. Professor Sung-Yool Choi from the School of Electrical Engineering and his team fabricated a flexible polymer memristor on a plastic substrate and found that changing the size of the conductive metal filaments formed inside the device, on the scale of metal atoms, can switch the memristor’s behavior from digital to analog. Using this phenomenon, the team developed flexible memristor-based electronic synapses that can update synaptic weight continuously and linearly, and that operate under mechanical deformation such as bending. 
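A crossbar array computes a neural-network layer physically: by Ohm's law each memristor passes a current equal to its input voltage times its conductance, and by Kirchhoff's current law the currents on each column wire add up, giving a vector-matrix product in a single step. The sketch below shows that read operation and contrasts an idealized analog synapse, whose conductance moves by equal steps per programming pulse (the continuous, linear update described above), with a digital switch that only toggles between two states. It is a textbook idealization, not a model of the team's devices; the function names, the number of conductance levels, and the bounds are hypothetical.

```python
from __future__ import annotations


def crossbar_read(voltages: list[float], conductances: list[list[float]]) -> list[float]:
    """Column currents of an ideal crossbar: I_j = sum_i V_i * G_ij.

    Ohm's law gives each cell's current (V_i * G_ij); Kirchhoff's current law adds
    the cell currents flowing into each column wire. Wire resistance and sneak
    paths are ignored.
    """
    if len(voltages) != len(conductances):
        raise ValueError("need one input voltage per row")
    n_cols = len(conductances[0])
    if any(len(row) != n_cols for row in conductances):
        raise ValueError("conductance matrix must be rectangular")
    return [
        sum(v * row[j] for v, row in zip(voltages, conductances))
        for j in range(n_cols)
    ]


def analog_update(g: float, pulses: int, g_min: float, g_max: float, levels: int) -> float:
    """Idealized analog synapse: each pulse shifts conductance by one equal step.

    Positive pulses potentiate, negative pulses depress; the result is clipped
    to [g_min, g_max]. `levels` is the number of distinct conductance states.
    """
    if levels < 2:
        raise ValueError("levels must be at least 2")
    step = (g_max - g_min) / (levels - 1)
    return min(g_max, max(g_min, g + pulses * step))


def digital_update(g: float, pulses: int, g_min: float, g_max: float) -> float:
    """Idealized digital (binary) switch: any set pulse jumps to g_max and any
    reset pulse to g_min, so intermediate weights cannot be stored."""
    if pulses > 0:
        return g_max
    if pulses < 0:
        return g_min
    return g


if __name__ == "__main__":
    G = [[1.0, 2.0],
         [3.0, 4.0]]                                   # conductances, arbitrary units
    print(crossbar_read([1.0, 2.0], G))                # [7.0, 10.0]
    print(analog_update(0.0, 3, 0.0, 1.0, levels=5))   # 0.75
    print(digital_update(0.0, 3, 0.0, 1.0))            # 1.0
```

Because the analog synapse can rest at intermediate levels, small training updates to a weight are preserved; the binary switch rounds every update to one of two states, which is the limitation of conventional digital memristors that the team set out to overcome.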
The team confirmed that an ANN based on these memristor synapses can effectively classify people’s facial images even when the images are damaged. This research demonstrated the possibility of a neuromorphic chip that can efficiently recognize faces, numbers, and objects. Professor Choi said, “We found the principles underlying the transition from digital to analog operation of the memristors. I believe that this research paves the way for applying various memristors to either digital memory or electronic synapses, and will accelerate the development of a high-performing neuromorphic chip.” In a joint research project with Professor Sung Gap Im (KAIST) and Professor V. P. Dravid (Northwestern University), this study was led by Dr. Byung Chul Jang (Samsung Electronics), Dr. Sungkyu Kim (Northwestern University) and Dr. Sang Yoon Yang (KAIST), and was published online in Nano Letters on January 4, 2019. Figure 1. a) Schematic illustration of a flexible pV3D3 memristor-based electronic synapse array. b) Cross-sectional TEM image of the flexible pV3D3 memristor

-

New LSB Achieving over 90% of Theoretical Capacity

(Professor Hee-Tak Kim and Hyunwon Chu) A KAIST research team has developed a lithium sulfur battery (LSB) that realizes 92% of its theoretical capacity and an areal capacity of 4 mAh/cm². LSBs are gaining a great deal of attention as an alternative to lithium ion batteries (LIBs) because they have a theoretical energy density up to six to seven times higher than that of LIBs and can be manufactured more cost-effectively. However, LSBs have struggled to approach their theoretical maximum capacity because lithium sulfide tends to grow uncontrollably on the electrodes, blocking electron transfer. To address this electrode passivation problem, researchers have added extra conductive agents to the electrode; however, this drastically lowers the energy density of LSBs, and it has remained difficult to exceed 70% of the theoretical capacity. Professor Hee-Tak Kim from the Department of Chemical and Biomolecular Engineering and his team replaced the lithium salt anions used in conventional LSB electrolytes with anions that have a high donor number. The team successfully induced three-dimensional growth of lithium sulfide on electrode surfaces and efficiently delayed electrode passivation. Based on this electrolyte design, the research team achieved 92% of the theoretical capacity with their high-capacity sulfur electrode (4 mAh/cm²), whose areal capacity is equivalent to that of a conventional LIB cathode. Furthermore, they formed a stable passivation film on the surface of the lithium anode, and the battery operated stably over 100 cycles. This technology, which can be flexibly used with various types of sulfur electrodes, could mark a new milestone in the battery industry. Professor Kim said, “We proposed a new physicochemical principle to overcome the limitations of conventional LSBs. 
I believe our achievement of obtaining 90% of the LSBs’ theoretical capacity without any capacity loss after 100 cycles will become a new milestone.” This research, first-authored by Hyunwon Chu and Hyungjun Noh, was published in Nature Communications on January 14, 2019. It was also selected for the journal’s editors’ highlights in recognition of its outstanding achievements. Figure 1. Lithium sulfur growth and its deposition mechanism for different sulfide growth behaviors Figure 2. Capacity and cycle life characteristics of the LSBs
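The capacity percentages in this story are measured against sulfur's theoretical specific capacity, which follows from Faraday's law for the full reduction S + 2Li⁺ + 2e⁻ → Li₂S (two electrons per sulfur atom). The sketch below computes that figure (about 1672 mAh/g; values of 1672 to 1675 mAh/g are quoted in the literature), what 92% of it corresponds to, and, purely as an illustration, the sulfur loading that would deliver 4 mAh/cm² at 92% utilization. That last step assumes the 4 mAh/cm² figure is the delivered areal capacity, which the article does not state explicitly, so the result is not a loading reported by the authors. The function and constant names are illustrative.

```python
"""Back-of-envelope capacity arithmetic for a lithium sulfur cathode (illustrative)."""

FARADAY_C_PER_MOL = 96485.33212   # Faraday constant, coulombs per mole of electrons
SULFUR_G_PER_MOL = 32.06          # standard atomic weight of sulfur
ELECTRONS_PER_SULFUR = 2          # S + 2 Li+ + 2 e- -> Li2S
COULOMBS_PER_MAH = 3.6            # 1 mAh = 1e-3 A * 3600 s = 3.6 C


def theoretical_capacity_mah_per_g(electrons: int = ELECTRONS_PER_SULFUR,
                                   molar_mass_g_per_mol: float = SULFUR_G_PER_MOL) -> float:
    """Charge stored per gram of active material if it reacts completely."""
    coulombs_per_gram = electrons * FARADAY_C_PER_MOL / molar_mass_g_per_mol
    return coulombs_per_gram / COULOMBS_PER_MAH


def delivered_capacity_mah_per_g(utilization: float) -> float:
    """Specific capacity delivered at a given fraction of the theoretical value."""
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return utilization * theoretical_capacity_mah_per_g()


def sulfur_loading_mg_per_cm2(areal_capacity_mah_per_cm2: float, utilization: float) -> float:
    """Sulfur mass per electrode area needed to deliver a given areal capacity.

    Hypothetical helper: assumes the areal capacity is the *delivered* value.
    """
    mah_per_mg = delivered_capacity_mah_per_g(utilization) / 1000.0
    return areal_capacity_mah_per_cm2 / mah_per_mg


if __name__ == "__main__":
    print(f"theoretical: {theoretical_capacity_mah_per_g():.0f} mAh/g")      # about 1672
    print(f"at 92%: {delivered_capacity_mah_per_g(0.92):.0f} mAh/g")          # about 1538
    print(f"S loading for 4 mAh/cm2 at 92%: "
          f"{sulfur_loading_mg_per_cm2(4.0, 0.92):.2f} mg/cm2")               # about 2.60
```

The same `delivered_capacity_mah_per_g` call converts the 70% ceiling mentioned for conventional designs into a specific capacity, which makes the gap between the two approaches easy to compare in absolute terms.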