College of Engineering News

-

Team KAIST placed among top two at MBZIRC Maritime..

Representing Korean Robotics at Sea: KAIST’s 26-month effort rewarded. Team KAIST placed among top two at MBZIRC Maritime Grand Challenge - Team KAIST, composed of students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, finished the challenge as first runner-up, winning prize money totaling $650,000 (KRW 860 million). - The team successfully led the autonomous collaboration of unmanned aerial and maritime vehicles using cutting-edge robotics and AI technology through to the final round of the competition, held in Abu Dhabi from January 10 to February 6, 2024. KAIST (President Kwang-Hyung Lee) reported on the 8th that Team KAIST, led by students from the labs of Professor Jinwhan Kim of the Department of Mechanical Engineering and Professor Hyunchul Shim of the School of Electrical Engineering, with Pablo Aviation as a partner, won a total of $650,000 (KRW 860 million) in prize money at the Maritime Grand Challenge of the Mohamed Bin Zayed International Robotics Challenge (MBZIRC), finishing as first runner-up. This competition, the largest robotics competition ever held over water, is sponsored by the government of the United Arab Emirates and organized by ASPIRE, an organization under the Abu Dhabi Ministry of Science, with total prize money of $3 million. The competition began at the end of 2021 with 52 teams from around the world, and after the first and second stages of screening, five teams were selected in February 2023 to go on to the finals. The final round was held from January 10 to February 6, 2024, using actual unmanned ships and drones in a secluded sea area of 10 km² off the coast of Abu Dhabi, the capital of the United Arab Emirates. A total of 18 KAIST students, together with Professor Jinwhan Kim and Professor Hyunchul Shim, took part in the competition on site in Abu Dhabi. 
Team KAIST will receive $500,000 in prize money for taking second place in the final, bringing the team’s total to $650,000 including the $150,000 special midterm award given to finalists. The final mission scenario is to find a fleeing target vessel carrying illegal cargo among many ships moving in a sea area where GPS is disabled, inspect its deck for two different types of stolen cargo, and recover them, using the aerial vehicle to retrieve the smaller cargo and a robot manipulator mounted on an unmanned ship to retrieve the larger one. The true aim is to complete the entire mission through autonomous collaboration between the unmanned ship and the aerial vehicle, without human intervention. In particular, since competition rules prohibit the use of GPS, Professor Jinwhan Kim's research team developed autonomous operation techniques for unmanned ships, including search and navigation methods using maritime radar, and Professor Hyunchul Shim's research team developed video-based navigation and a technology to combine a small autonomous robot with a drone. The overall mission consists of two stages: an inspection stage, in which the target ship is found among several ships moving at sea, and an intervention stage, in which the cargo on the deck of that ship is retrieved. Each team was given three attempts, and the team that completed the highest-level mission in the shortest time across those attempts received the highest score. In the first attempt, KAIST was the only team to succeed in the first-stage search mission, but the competition began in earnest when the Croatian team also completed the first-stage mission in the second attempt. 
As the competition schedule was delayed by strong winds and high waves that continued for several days, the organizers decided to hold the finals with three teams: Team KAIST and the team from Croatia’s University of Zagreb, both of which completed the first stage of the mission, and Team Fly-Eagle, a team of researchers from China and the UAE that partially completed the first stage. The three teams were given the chance to proceed to the finals and make their third attempt, and in the final competition, the Croatian team won, KAIST took second place, and the combined UAE-China team took third place. The final prize for the winning team is set at $2 million, with $500,000 for the runner-up and $250,000 for third place. Professor Jinwhan Kim of the Department of Mechanical Engineering, who served as the advisor for Team KAIST, said, “I would like to express my gratitude and congratulations to the students who put a huge academic and physical effort into preparing for the competition over the past two years. I feel rewarded because, regardless of the results, every bit of effort put into this up to this point will become the basis of their confidence and a valuable asset in their growth into great researchers.” Sol Han, a doctoral student in mechanical engineering who served as the team leader, said, “I am disappointed by how narrowly we missed out on winning at the end, but I am satisfied with the significance of the results we achieved, and I am grateful to the team members who worked hard together for them.” HD Hyundai, Rainbow Robotics, Avikus, and FIMS also participated as sponsors of Team KAIST's campaign.

-

KAIST develops an artificial muscle device that pr..

- Professor IlKwon Oh’s research team in KAIST’s Department of Mechanical Engineering developed a soft fluidic switch using an ionic polymer artificial muscle that runs on ultra-low power and produces a force 34 times its own weight. - Its light weight and small size make it applicable to various industrial fields such as soft electronics, smart textiles, and biomedical devices, as it controls fluid flow with high precision even in narrow spaces. Soft robots, medical devices, and wearable devices have permeated our daily lives. KAIST researchers have developed a fluid switch using ionic polymer artificial muscles that operates at ultra-low power and produces a force 34 times greater than its weight. Fluid switches control fluid flow, directing the fluid in a specific direction to produce various movements. KAIST (President Kwang-Hyung Lee) announced on the 4th of January that a research team under Professor IlKwon Oh from the Department of Mechanical Engineering has developed a soft fluidic switch that operates at ultra-low voltage and can be used in narrow spaces. Artificial muscles imitate human muscles and provide more flexible and natural movements than traditional motors, making them one of the basic elements used in soft robots, medical devices, and wearable devices. These artificial muscles create movements in response to external stimuli such as electricity, air pressure, and temperature changes, and to make use of them it is important to control these movements precisely. Switches based on existing motors were difficult to use within limited spaces due to their rigidity and large size. To address these issues, the research team developed an electro-ionic soft actuator that can control fluid flow while producing a large force, even in a narrow pipe, and used it as a soft fluidic switch. < Figure 1. The separation of fluid droplets using a soft fluid switch at ultra-low voltage. 
> The ionic polymer artificial muscle developed by the research team is composed of metal electrodes and ionic polymers, and it generates force and movement in response to electricity. A polysulfonated covalent organic framework (pS-COF), made by combining organic molecules on the surface of the artificial muscle electrode, was used to generate an impressive amount of force relative to its weight at ultra-low voltage (~0.01 V). As a result, the artificial muscle, which at a thickness of 180 µm is about as thin as a hair, produced a force more than 34 times greater than its own weight of 10 mg and moved smoothly. Through this, the research team was able to precisely control the direction of fluid flow with low power. < Figure 2. The synthesis and use of pS-COF as a common electrode-electrolyte host for electroactive soft fluid switches. A) The synthesis schematic of pS-COF. B) The schematic diagram of the operating principle of the electrochemical soft switch. C) The schematic diagram of using a pS-COF-based electrochemical soft switch to control fluid flow in dynamic operation. > Professor IlKwon Oh, who led this research, said, “The electrochemical soft fluidic switch that operates at ultra-low power can open up many possibilities in the fields of soft robots, soft electronics, and microfluidics based on fluid control.” He added, “From smart fibers to biomedical devices, this technology has the potential to be immediately put to use in a variety of industrial settings as it can be easily applied to ultra-small electronic systems in our daily lives.” The results of this study, in which Dr. Manmatha Mahato, a research professor in the Department of Mechanical Engineering at KAIST, participated as the first author, were published in the international academic journal Science Advances on December 13, 2023. 
(Paper title: Polysulfonated Covalent Organic Framework as Active Electrode Host for Mobile Cation Guests in Electrochemical Soft Actuator) This research was conducted with support from the National Research Foundation of Korea's Leader Scientist Support Project (Creative Research Group) and Future Convergence Pioneer Project. * Paper DOI: https://www.science.org/doi/abs/10.1126/sciadv.adk9752

-

A KAIST Research Team Develops High-Performance St..

With the market for wearable electronic devices growing rapidly, stretchable solar cells that can function under strain have received considerable attention as an energy source. To build such solar cells, their photoactive layer, which converts light into electricity, must show high electrical performance while also being mechanically elastic. However, satisfying both requirements at once is challenging, which makes stretchable solar cells difficult to develop. On December 26, a KAIST research team from the Department of Chemical and Biomolecular Engineering (CBE) led by Professor Bumjoon Kim announced the development of a new conductive polymer material that achieves both high electrical performance and elasticity, and introduced the world’s highest-performing stretchable organic solar cell. Organic solar cells are devices whose photoactive layer, which is responsible for the conversion of light into electricity, is composed of organic materials. Compared to existing solar cells based on inorganic materials, they are lighter and more flexible, making them highly applicable to wearable electronic devices. Solar cells are particularly important as an energy source for such devices, but high-efficiency solar cells often lack flexibility, and their application in wearable devices has therefore been limited to this point. The team led by Professor Kim chemically bonded a highly stretchable polymer to a conductive polymer with excellent electrical properties, producing a new conductive polymer with both electrical conductivity and mechanical stretchability. Organic solar cells using this polymer reach the highest reported level of photovoltaic conversion efficiency (19%), while also showing 10 times the stretchability of existing devices. 
The team thereby built the world’s highest-performing stretchable solar cell, which can be stretched by up to 40% during operation, and demonstrated its applicability to wearable devices. < Figure 1. Chemical structure of the newly developed conductive polymer and performance of stretchable organic solar cells using the material. > Professor Kim said, “Through this research, we not only developed the world’s best-performing stretchable organic solar cell, but also, significantly, developed a new polymer that can serve as a base material for various electronic devices that need to be malleable and/or elastic.” < Figure 2. Photovoltaic efficiency and mechanical stretchability of newly developed polymers compared to existing polymers. > This research, conducted by KAIST researchers Jin-Woo Lee and Heung-Goo Lee as co-first authors in cooperation with teams led by Professor Taek-Soo Kim from the Department of Mechanical Engineering and Professor Sheng Li from the Department of CBE, was published in Joule on December 1 (Paper Title: Rigid and Soft Block-Copolymerized Conjugated Polymers Enable High-Performance Intrinsically-Stretchable Organic Solar Cells). This research was supported by the National Research Foundation of Korea.

-

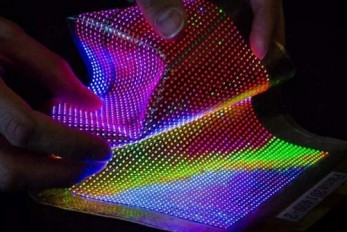

A KAIST Team Develops Selective Transfer Printing ..

- A KAIST research team led by Professor Keon Jae Lee demonstrates the transfer printing of a large number of micro-sized inorganic semiconductor chips via the selective modulation of micro-vacuum force. MicroLEDs are a light source for next-generation displays that use inorganic LED chips smaller than 100 μm. MicroLEDs have attracted a great deal of attention due to their superior electrical/optical properties, reliability, and stability compared to conventional displays such as LCD, OLED, and QD. To commercialize microLEDs, transfer printing technology is essential for rearranging microLED dies from a growth substrate onto the final substrate with a desired layout and precise alignment. However, previous transfer methods still face many challenges, such as the need for additional adhesives, misalignment, low transfer yield, and chip damage. Professor Lee’s research team has developed a micro-vacuum assisted selective transfer printing (µVAST) technology that transfers a large number of microLED chips by adjusting the micro-vacuum suction force. The key technology relies on a laser-induced etching (LIE) method for forming 20 μm-sized micro-hole arrays with a high aspect ratio on glass substrates at a fabrication speed of up to 7,000 holes per second. The LIE-drilled glass is connected to vacuum channels, which control the micro-vacuum force at the desired hole arrays to selectively pick up and release the microLEDs. The micro-vacuum assisted transfer printing achieves higher adhesion switchability than previous transfer methods, enabling the assembly of micro-sized semiconductors of various heterogeneous materials, sizes, shapes, and thicknesses onto arbitrary substrates with high transfer yields. < Figure 01. Concept of micro-vacuum assisted selective transfer printing (μVAST). 
> Professor Keon Jae Lee said, “The micro-vacuum assisted transfer provides an interesting tool for large-scale, selective integration of microscale high-performance inorganic semiconductors. Currently, we are investigating the transfer printing of commercial microLED chips with an ejector system for commercializing next-generation displays (large-screen TVs, flexible/stretchable devices) and wearable phototherapy patches.” This result, titled “Universal selective transfer printing via micro-vacuum force,” was published in Nature Communications on November 26th, 2023. (DOI: 10.1038/S41467-023-43342-8) < Figure 02. Universal transfer printing of thin-film semiconductors via μVAST. > < Figure 03. Flexible devices fabricated by μVAST. > Title: Entire process including LIE and µVAST Vimeo link: https://vimeo.com/894430416?share=copy

-

KAIST presents strategies for environmentally frie..

- Provides current research trends in bio-based polyamide production - Research on bio-based polyamide production gains importance for achieving a carbon-neutral society Global industries focused on carbon neutrality, under the slogan "Net-Zero," are gaining increasing attention. In particular, research on the microbial production of polymers, which replaces traditional chemical methods with biological approaches, is actively progressing. Polyamides, represented by nylon, are linear polymers widely used in various industries such as automotive, electronics, textiles, and medical fields. They possess beneficial properties such as high tensile strength, electrical insulation, heat resistance, wear resistance, and biocompatibility. Nylon was commercialized in 1938, and approximately 7 million tons of polyamides are now produced worldwide each year. Considering their broad applications and significance, producing polyamides through bio-based methods holds considerable environmental and industrial importance. KAIST (President Kwang-Hyung Lee) announced that a research team led by Distinguished Professor Sang Yup Lee, including Dr. Jong An Lee and doctoral candidate Ji Yeon Kim from the Department of Chemical and Biomolecular Engineering, published a paper titled "Current Advancements in Bio-Based Production of Polyamides". The paper was featured on the cover of the monthly issue of "Trends in Chemistry" by Cell Press. As part of climate change response technologies, bio-refineries use biotechnological and chemical methods to produce industrially important chemicals and biofuels from renewable biomass without relying on fossil resources. Notably, systems metabolic engineering, pioneered by KAIST's Distinguished Professor Sang Yup Lee, is a research field that effectively manipulates microbial metabolic pathways to produce valuable chemicals, forming the core technology for bio-refineries. 
The research team has successfully developed high-performance strains producing a variety of compounds, including succinic acid, biodegradable plastics, biofuels, and natural products, using systems metabolic engineering tools and strategies. The research team predicted that if bio-based production technology for polyamides, which are widely used in clothing and textiles, becomes widespread, it will attract attention as an environmentally friendly future technology that can respond to the climate crisis. In this study, the research team comprehensively reviewed bio-based polyamide production strategies. They provided insights into the advancements in polyamide monomer production using metabolically engineered microorganisms and highlighted recent trends in bio-based polyamides made from these monomers. Additionally, they reviewed strategies for synthesizing bio-based polyamides through the chemical conversion of natural oils and discussed the biodegradability and recycling of the polyamides. Furthermore, the paper presented future directions in which metabolic engineering can be applied to bio-based polyamide production, contributing to an environmentally friendly and sustainable society. Ji Yeon Kim, the co-first author of this paper from KAIST, stated, "The importance of utilizing systems metabolic engineering tools and strategies for bio-based polyamide production is becoming increasingly prominent in achieving carbon neutrality." Professor Sang Yup Lee emphasized, "Amid growing concerns about climate change, the significance of environmentally friendly and sustainable industrial development is greater than ever. Systems metabolic engineering is expected to have a significant impact not only on the chemical industry but also in various fields." < [Figure 1] A schematic overview of the overall process for polyamides production > This paper by Dr. Jong An Lee, PhD student Ji Yeon Kim, Dr. 
Jung Ho Ahn, and Master Yeah-Ji Ahn from the Department of Chemical and Biomolecular Engineering at KAIST was published in the December issue of 'Trends in Chemistry', an authoritative review journal in the field of chemistry published by Cell Press. It was published on December 7 as the cover paper and featured review. ※ Paper title: Current advancements in the bio-based production of polyamides ※ Author information: Jong An Lee, Ji Yeon Kim, Jung Ho Ahn, Yeah-Ji Ahn, and Sang Yup Lee This research was conducted with support from the Development of Platform Technologies of Microbial Cell Factories for the Next-Generation Biorefineries Project and the C1 Gas Refinery Program of the Korean Ministry of Science and ICT. < [Figure 2] Cover paper of the December issue of Trends in Chemistry >

-

KAIST introduces eco-friendly technologies for pla..

- A research team under Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering published a paper in Nature Microbiology on the overview and trends of plastic production and degradation technology using microorganisms. - Eco-friendly and sustainable plastic production and degradation technology using microorganisms was presented as a core technology for achieving a plastic circular economy. Plastic is one of the most important materials in modern society, with approximately 460 million tons produced annually and production expected to reach approximately 1.23 billion tons in 2060. However, since 1950, more than 6.3 billion tons of plastic waste has been generated, and it is believed that more than 140 million tons of it has accumulated in the aquatic environment. Recently, the seriousness of microplastic pollution has become apparent: it not only poses a risk to the marine ecosystem and human health, but also worsens global warming by inhibiting the activity of marine plankton, which play an important role in lowering the Earth's carbon dioxide concentration. KAIST (President Kwang-Hyung Lee) announced on December 11 that a research team under Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering had published a paper titled 'Sustainable production and degradation of plastics using microbes', which covers the latest technologies for producing plastics and processing waste plastics in an eco-friendly manner using microorganisms. As the international community moves to solve this plastic problem, various efforts are being made, including an agreement among 175 countries to conclude, by 2024, a legally binding agreement aimed at ending plastic pollution. Various technologies are being developed for sustainable plastic production and processing, and among them, biotechnology using microorganisms is attracting attention. 
Microorganisms can naturally produce or decompose certain compounds, and this ability is maximized through biotechnologies such as metabolic engineering and enzyme engineering to produce plastics from renewable biomass resources instead of fossil raw materials and to decompose waste plastics. Accordingly, the research team comprehensively analyzed the latest microorganism-based technologies for the sustainable production and decomposition of plastics and presented how they actually contribute to solving the plastic problem. Based on this, they presented the limitations, prospects, and research directions of the technologies for achieving a circular economy for plastics. The microorganism-based technologies covered range from widely used synthetic plastics such as polyethylene (PE) to promising bioplastics such as polyhydroxyalkanoate (PHA), a natural polymer derived from microorganisms that is completely biodegradable in the natural environment and poses no risk of microplastic generation. Commercialization status and the latest technologies were also discussed. In addition, technologies for decomposing these plastics using microorganisms and their enzymes, and upcycling technologies for converting the decomposition products into other useful compounds, were introduced, highlighting the competitiveness and potential of microorganism-based technology. First author So Young Choi, a research assistant professor in the Department of Chemical and Biomolecular Engineering at KAIST, said, “In the future, we will be able to easily find eco-friendly plastics made using microorganisms all around us,” and corresponding author Distinguished Professor Sang Yup Lee said, “Plastic can be made more sustainable. 
It is important to use plastics responsibly to protect the environment and simultaneously achieve economic and social development through the new plastics industry, and we look forward to the improved performance of microbial metabolic engineering technology.” This paper was published on November 30th in the online edition of Nature Microbiology. ※ Paper Title: Sustainable production and degradation of plastics using microbes ※ Authors: So Young Choi, Youngjoon Lee, Hye Eun Yu, In Jin Cho, Minju Kang & Sang Yup Lee < Life cycle of plastics produced using microbial biotechnologies > This research was conducted with support from the Development of Platform Technologies of Microbial Cell Factories for the Next-Generation Biorefineries Project (2022M3J5A1056117) and the Development of Platform Technology for the Production of Novel Aromatic Bioplastic Using Microbial Cell Factories Project (2022M3J4A1053699) of the Korean Ministry of Science and ICT.

-

KAIST-UCSD researchers build an enzyme discovering..

- A joint research team led by Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering and Bernhard Palsson of UCSD developed ‘DeepECtransformer’, an artificial intelligence that can predict the Enzyme Commission (EC) numbers of proteins. - The AI is designed to discover enzymes that have not yet been identified and can predict a total of 5,360 types of EC numbers. - It is expected to be used in the development of microbial cell factories that produce environmentally friendly chemicals, as a core technology for analyzing the metabolic network of a genome. Although E. coli is one of the most studied organisms, the function of 30% of the proteins that make up E. coli has not yet been clearly revealed. The AI was used to discover 464 types of enzymes among these uncharacterized proteins, and the researchers went on to verify the predictions for 3 of these proteins through in vitro enzyme assays. KAIST (President Kwang-Hyung Lee) announced on the 24th that a joint research team composed of Gi Bae Kim, Ji Yeon Kim, Dr. Jong An Lee and Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering at KAIST, and Dr. Charles J. Norsigian and Professor Bernhard O. Palsson of the Department of Bioengineering at UCSD, has developed DeepECtransformer, an artificial intelligence that can predict enzyme functions from the protein sequence, and has used the AI to establish a prediction system that identifies enzyme functions quickly and accurately. Enzymes are proteins that catalyze biological reactions, and identifying the function of each enzyme is essential to understanding the various chemical reactions that occur in living organisms and the metabolic characteristics of those organisms. 
The Enzyme Commission (EC) number is an enzyme function classification system designed by the International Union of Biochemistry and Molecular Biology, and in order to understand the metabolic characteristics of various organisms, it is necessary to develop a technology that can quickly analyze the enzymes present in a genome and their EC numbers. Various deep learning-based methodologies have been developed to analyze the features of biological sequences, including for protein function prediction, but most of them suffer from the black box problem, in which the inference process of the AI cannot be interpreted. Various AI-based prediction systems for enzyme function have also been reported, but they either do not solve this black box problem or cannot interpret the reasoning process at a fine-grained level (e.g., the level of amino acid residues in the enzyme sequence). The joint team developed DeepECtransformer, an AI that uses deep learning and a protein homology analysis module to predict the enzyme function of a given protein sequence. To better capture the features of protein sequences, the team additionally used the transformer architecture, which is commonly used in natural language processing, to extract features important for enzyme function in the context of the entire protein sequence, which enabled the team to accurately predict the EC number of the enzyme. DeepECtransformer can predict a total of 5,360 EC numbers. The joint team further analyzed the transformer architecture to understand the inference process of DeepECtransformer, and found that during inference the AI uses information on catalytic active sites and/or cofactor binding sites, which are important for enzyme function. By analyzing the black box of DeepECtransformer, the team confirmed that the AI had identified the features important for enzyme function on its own during the learning process. 
"By utilizing the prediction system we developed, we were able to predict the functions of enzymes that had not yet been identified and verify them experimentally," said Gi Bae Kim, the first author of the paper. "By using DeepECtransformer to identify previously unknown enzymes in living organisms, we will be able to more accurately analyze various facets involved in the metabolic processes of organisms, such as the enzymes needed to biosynthesize various useful compounds or the enzymes needed to biodegrade plastics," he added. "DeepECtransformer, which quickly and accurately predicts enzyme functions, is a key technology in functional genomics, enabling us to analyze the function of entire enzymes at the systems level," said Professor Sang Yup Lee. He added, “We will be able to use it to develop eco-friendly microbial factories based on comprehensive genome-scale metabolic models, potentially minimizing missing information of metabolism.” The joint team’s work on DeepECtransformer is described in the paper titled "Functional annotation of enzyme-encoding genes using deep learning with transformer layers," written by Gi Bae Kim, Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering of KAIST, and their colleagues. The peer-reviewed paper was published in Nature Communications on the 14th of November. This research was conducted with support from “the Development of next-generation biorefinery platform technologies for leading bio-based chemicals industry project (2022M3J5A1056072)” and “Development of platform technologies of microbial cell factories for the next-generation biorefineries project (2022M3J5A1056117)” of the National Research Foundation, supported by the Korean Ministry of Science and ICT (Project Leader: Distinguished Professor Sang Yup Lee, KAIST). < Figure 1. The structure of DeepECtransformer's artificial neural network >

-

An intravenous needle that irreversibly softens vi..

- A joint research team at KAIST developed an intravenous (IV) needle that softens upon insertion, minimizing the risk of damage to blood vessels and tissues. - Once used, it remains soft even at room temperature, preventing accidental needlestick injuries and unethical reuse of needles. - A thin-film temperature sensor can be embedded in the needle, enabling real-time monitoring of the patient's core body temperature, or detection of unintended fluid leakage, during IV medication. Intravenous (IV) injection is commonly used in patient treatment worldwide because, by delivering drugs directly into the blood vessel, it produces rapid effects and allows continuous administration of medication. However, medical IV needles, made of hard materials such as stainless steel or plastic that do not mechanically match the soft biological tissues of the body, can cause critical problems in healthcare settings, ranging from minor tissue damage at the injection site to serious inflammation. The structure and rigidity of medical IV devices also make possible the unethical reuse of needles to reduce injection costs, leading to the transmission of deadly blood-borne infections such as human immunodeficiency virus (HIV) and hepatitis B/C viruses. Furthermore, unintended needlestick injuries, which are a viable source of such infections, occur frequently in medical settings worldwide, and IV needles are the most likely of all to transmit disease. For these reasons, in 2015 the World Health Organization (WHO) launched a policy on safe injection practices to encourage the development and use of “smart” syringes with features that prevent reuse, following a tremendous increase in the number of deadly infectious diseases worldwide due to issues related to medical sharps. 
KAIST announced on the 13th that Professor Jae-Woong Jeong and his research team at its School of Electrical Engineering succeeded in developing the Phase-Convertible, Adapting and non-REusable (P-CARE) needle with variable stiffness, which can improve patient health and ensure the safety of medical staff, through convergent joint research with another team led by Professor Won-Il Jeong of the Graduate School of Medical Sciences. The new technology is expected to allow patients to move without worrying about pain at the injection site, as it reduces the risk of damage to the blood vessel wall while patients receive IV medication. This is possible because of the needle’s tunable stiffness: the rise in temperature upon insertion into the body makes it soft and flexible, so that it adapts to the movement of the thin-walled vein. It is also expected to prevent blood-borne infections caused by accidental needlestick injuries or unethical reuse of syringes, as the deformed needle remains perpetually soft even after it is retracted from the injection site. The results of this research, in which Karen-Christian Agno, a doctoral researcher of the School of Electrical Engineering, and Dr. Keungmo Yang of the Graduate School of Medical Sciences participated as co-first authors, were published in Nature Biomedical Engineering on October 30. (Paper title: A temperature-responsive intravenous needle that irreversibly softens on insertion)
< Figure 1. Disposable variable stiffness intravenous needle. (a) Conceptual illustration of the key features of the P-CARE needle whose mechanical properties can be changed by body temperature, (b) Photograph of commonly used IV access devices and the P-CARE needle, (c) Performance of common IV access devices and the P-CARE needle > “We’ve developed this special needle using advanced materials and micro/nano engineering techniques, and it can solve many global problems related to conventional medical needles used in healthcare worldwide”, said Jae-Woong Jeong, Ph.D., an associate professor of Electrical Engineering at KAIST and a lead senior author of the study. The softening IV needle created by the research team consists of a hollow mechanical needle frame made of the liquid metal gallium, encapsulated within an ultra-soft silicone material. In its solid state, gallium is hard enough to puncture soft biological tissues. However, gallium melts when it is exposed to body temperature upon insertion, turning the needle as soft as the surrounding tissue and enabling stable delivery of the drug without damaging blood vessels. Once used, a needle remains soft even at room temperature due to the supercooling phenomenon of gallium, fundamentally preventing needlestick accidents and reuse problems. Biocompatibility of the softening IV needle was validated through in vivo studies in mice. The studies showed that implanted needles caused significantly less inflammation than standard IV access devices of similar size made of metal needles or plastic catheters. The study also confirmed that the new needle was able to deliver medications as reliably as commercial injection needles. < Photo 1. Photo of the P-CARE needle that softens with body temperature. > The researchers also showed the possibility of integrating a customized ultra-thin temperature sensor with the softening IV needle to measure the on-site temperature, which can further enhance patients’ well-being. 
The single sensor-needle assembly can be used to monitor the core body temperature, or even detect fluid leakage on-site during indwelling use, eliminating the need for additional medical tools or procedures and providing patients with better health care services. The researchers believe that this transformative IV needle can open new opportunities for a wide range of applications, particularly in clinical settings, in terms of redesigning other medical needles and sharp medical tools to reduce muscle tissue injury during indwelling use. The softening IV needle may become even more valuable at present, as an estimated 16 billion medical injections are administered annually worldwide, yet not all needles are disposed of properly, according to a 2018 WHO report. < Figure 2. Biocompatibility test for P-CARE needle: Images of H&E stained histology (the area inside the dashed box on the left is provided in an expanded view on the right), TUNEL staining (green), DAPI staining of nuclei (blue) and co-staining (TUNEL and DAPI) of muscle tissue from different organs. > < Figure 3. Conceptual images of potential utilization for temperature monitoring function of P-CARE needle integrated with a temperature sensor. (a) Schematic diagram of injecting a drug through intravenous injection into the abdomen of a laboratory mouse (b) Change of body temperature upon injection of drug (c) Conceptual illustration of normal intravenous drug injection (top) and fluid leakage (bottom) (d) Comparison of body temperature during normal drug injection and fluid leakage: when a fluid leak occurs due to incorrect insertion, a sudden drop in temperature is detected. > This work was supported by grants from the National Research Foundation of Korea (NRF) funded by the Ministry of Science and ICT.

-

KAIST proposes alternatives to chemical factories ..

- A computer simulation program, “iBridge”, was developed at KAIST that can put together microbial cell factories quickly and efficiently to produce cosmetics, food additives, and raw materials for nylons - Eco-friendly and sustainable fermentation process to establish an alternative to chemical plants As climate change and environmental concerns intensify, sustainable microbial cell factories are garnering significant attention as candidates to replace chemical plants. To develop microorganisms for use in microbial cell factories, it is crucial to modify their metabolic processes by modulating their gene expression so that they produce the target chemical efficiently. Yet the challenge persists in determining which genes to amplify and which to suppress, and the experimental verification of these modification targets is a time- and resource-intensive process even for experts. These challenges were addressed by a team of researchers at KAIST (President Kwang-Hyung Lee) led by Distinguished Professor Sang Yup Lee. The school announced on the 9th that the team, under Professor Lee’s guidance, had developed a novel computer simulation program named “iBridge” that offers a method for building microbial factories quickly, efficiently, and at low cost. This innovative system is designed to predict the gene targets to overexpress or downregulate in order to produce a desired compound, enabling the cost-effective and efficient construction of microbial cell factories specifically tailored to produce the chemical compound in demand from renewable biomass. Systems metabolic engineering is a field of research and engineering pioneered by KAIST’s Distinguished Professor Sang Yup Lee that seeks to produce valuable compounds in industrial demand using microorganisms that are reconfigured by a combination of methods including, but not limited to, metabolic engineering, synthetic biology, systems biology, and fermentation engineering. 
In order to improve microorganisms’ capability to produce useful compounds, it is essential to delete, suppress, or overexpress microbial genes. However, it is difficult even for experts to identify the gene targets to modify without experimentally confirming each of them, which can take up an enormous amount of time and resources. The newly developed iBridge identifies positive and negative metabolites within cells, which exert a positive or negative impact on the formation of the product, by calculating the sum of covariances of their outgoing (consuming) reaction fluxes for a target chemical. Subsequently, it pinpoints "bridge" reactions responsible for converting negative metabolites into positive ones as candidates for overexpression, while identifying the opposite reactions as targets for downregulation. The research team successfully utilized the iBridge simulation to establish E. coli microbial cell factories, each capable of producing one of three compounds that are in high demand, at production levels that have not previously been reported anywhere in the world. They developed E. coli strains that can each produce panthenol, a moisturizing agent found in many cosmetics; putrescine, which is one of the key components in nylon production; and 4-hydroxyphenyllactic acid, an anti-bacterial food additive. In addition to these three compounds, the study presents predictions of genes to overexpress and suppress in order to construct microbial factories for 298 other industrially valuable compounds. Dr. Youngjoon Lee, the co-first author of this paper from KAIST, emphasized the accelerated construction of various microbial factories that the newly developed simulation enabled. He stated, "With the use of this simulation, multiple microbial cell factories have been established significantly faster than would have been possible using conventional methods. Microbial cell factories producing a wider range of valuable compounds can now be constructed quickly using this technology." 
Professor Sang Yup Lee said, "Systems metabolic engineering is a crucial technology for addressing the current climate change issues." He added, "This simulation could significantly expedite the transition from resorting to conventional chemical factories to utilizing environmentally friendly microbial factories." < Figure. Conceptual diagram of the flow of iBridge simulation > The team’s work on iBridge is described in a paper titled "Genome-Wide Identification of Overexpression and Downregulation Gene Targets Based on the Sum of Covariances of the Outgoing Reaction Fluxes" written by Dr. Won Jun Kim and Dr. Youngjoon Lee of the Bioprocess Research Center and Professors Hyun Uk Kim and Sang Yup Lee of the Department of Chemical and Biomolecular Engineering of KAIST. The peer-reviewed paper was published in “Cell Systems” by Cell Press on the 6th of November. This research was conducted with support from the Development of Platform Technologies of Microbial Cell Factories for the Next-generation Biorefineries Project (Project Leader: Distinguished Professor Sang Yup Lee, KAIST) and the Development of Platform Technology for the Production of Novel Aromatic Bioplastic using Microbial Cell Factories Project (Project Leader: Research Professor So Young Choi, KAIST) of the Korean Ministry of Science and ICT.
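
The scoring idea described for iBridge can be illustrated with a toy Python sketch. This is not the team's implementation: the real method works on genome-scale metabolic models, whereas the four reactions, the flux values, and the function names below are all hypothetical and chosen only to show how a sum of covariances could label metabolites and flag "bridge" reactions.

```python
from typing import Dict, List, Tuple

Reaction = Tuple[List[str], List[str]]  # (substrates, products)


def covariance(x: List[float], y: List[float]) -> float:
    """Sample covariance of two equally long flux series."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    return sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / (n - 1)


def metabolite_scores(fluxes: Dict[str, List[float]], target: str,
                      reactions: Dict[str, Reaction]) -> Dict[str, float]:
    """Sum, per metabolite, the covariances between each reaction that
    consumes it and the target production flux."""
    scores: Dict[str, float] = {}
    for name, (substrates, _products) in reactions.items():
        cov = covariance(fluxes[name], fluxes[target])
        for metabolite in substrates:
            scores[metabolite] = scores.get(metabolite, 0.0) + cov
    return scores


def bridge_targets(scores: Dict[str, float],
                   reactions: Dict[str, Reaction]) -> Tuple[List[str], List[str]]:
    """Negative-to-positive reactions become overexpression candidates,
    positive-to-negative reactions become downregulation candidates."""
    overexpress, downregulate = [], []
    for name, (substrates, products) in reactions.items():
        neg_to_pos = (any(scores.get(m, 0.0) < 0 for m in substrates)
                      and any(scores.get(m, 0.0) > 0 for m in products))
        pos_to_neg = (any(scores.get(m, 0.0) > 0 for m in substrates)
                      and any(scores.get(m, 0.0) < 0 for m in products))
        if neg_to_pos and not pos_to_neg:
            overexpress.append(name)
        elif pos_to_neg and not neg_to_pos:
            downregulate.append(name)
    return overexpress, downregulate


# Hypothetical toy network: metabolite B feeds the product P, C drains to waste.
reactions: Dict[str, Reaction] = {
    "B_to_P": (["B"], ["P"]),        # target-producing reaction
    "C_to_waste": (["C"], ["waste"]),
    "C_to_B": (["C"], ["B"]),
    "B_to_C": (["B"], ["C"]),
}
# Hypothetical flux values across four simulated conditions.
fluxes = {
    "B_to_P": [1.0, 2.0, 3.0, 4.0],
    "C_to_waste": [4.0, 3.0, 2.0, 1.0],
    "C_to_B": [0.0, 1.0, 0.0, 1.0],
    "B_to_C": [2.0, 2.0, 1.0, 1.0],
}

scores = metabolite_scores(fluxes, "B_to_P", reactions)
overexpress, downregulate = bridge_targets(scores, reactions)
print(scores, overexpress, downregulate)
```

In this toy network B scores positive and C scores negative, so the reaction converting C into B is flagged for overexpression and the reverse conversion for downregulation.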

-

Professor Joseph J. Lim of KAIST receives the Best..

- Professor Joseph J. Lim from the Kim Jaechul Graduate School of AI at KAIST and his team receive an award for the most outstanding paper in the implementation of robot systems. - Professor Lim works on AI-based perception, reasoning, and sequential decision-making to develop systems capable of intelligent decision-making, including robot learning < Photo 1. RSS2023 Best System Paper Award Presentation > The team of Professor Joseph J. Lim from the Kim Jaechul Graduate School of AI at KAIST has been honored with the 'Best System Paper Award' at "Robotics: Science and Systems (RSS) 2023". The RSS conference is globally recognized as a leading event for showcasing the latest discoveries and advancements in the field of robotics. It is a venue where the greatest minds in robotics engineering and robot learning come together to share their research breakthroughs. The RSS Best System Paper Award is a prestigious honor granted to a paper that excels in presenting real-world robot system implementation and experimental results. < Photo 2. Professor Joseph J. Lim of Kim Jaechul Graduate School of AI at KAIST > The team led by Professor Lim, including two Master's students and an alumnus (soon to be appointed at Yonsei University), received the prestigious RSS Best System Paper Award, making it the first-ever achievement for a Korean and for a domestic institution. < Photo 3. Certificate of the Best System Paper Award presented at RSS 2023 > This award is especially meaningful considering the broader challenges in the field. Although recent progress in artificial intelligence and deep learning algorithms has resulted in numerous breakthroughs in robotics, most of these achievements have been confined to relatively simple and short tasks, like walking or pick-and-place. Moreover, tasks are typically performed in simulated environments rather than dealing with more complex, long-horizon real-world tasks such as factory operations or household chores. 
These limitations primarily stem from the considerable challenge of acquiring data required to develop and validate learning-based AI techniques, particularly in real-world complex tasks. In light of these challenges, this paper introduced a benchmark that employs 3D printing to simplify the reproduction of furniture assembly tasks in real-world environments. Furthermore, it proposed a standard benchmark for the development and comparison of algorithms for complex and long-horizon tasks, supported by teleoperation data. Ultimately, the paper suggests a new research direction of addressing complex and long-horizon tasks and encourages diverse advancements in research by facilitating reproducible experiments in real-world environments. Professor Lim underscored the growing potential for integrating robots into daily life, driven by an aging population and an increase in single-person households. As robots become part of everyday life, testing their performance in real-world scenarios becomes increasingly crucial. He hoped this research would serve as a cornerstone for future studies in this field. The Master's students, Minho Heo and Doohyun Lee, from the Kim Jaechul Graduate School of AI at KAIST, also shared their aspirations to become global researchers in the domain of robot learning. Meanwhile, the alumnus of Professor Lim's research lab, Dr. Youngwoon Lee, is set to be appointed to the Graduate School of AI at Yonsei University and will continue pursuing research in robot learning. Paper title: Furniture Bench: Reproducible Real-World Benchmark for Long-Horizon Complex Manipulation. Robotics: Science and Systems. < Image. Conceptual Summary of the 3D Printing Technology >

-

KAIST researchers find sleep delays more prevalent..

Sleep has a huge impact on health, well-being and productivity, but how long and how well people sleep these days has not been accurately reported. Previous research on how much and how well we sleep has mostly relied on self-reports or was confined within the data from the unnatural environments of the sleep laboratories. So, the questions remained: Is the amount and quality of sleep purely a personal choice? Could they be independent from social factors such as culture and geography? < From left to right, Sungkyu Park of Kangwon National University, South Korea; Assem Zhunis of KAIST and IBS, South Korea; Marios Constantinides of Nokia Bell Labs, UK; Luca Maria Aiello of the IT University of Copenhagen, Denmark; Daniele Quercia of Nokia Bell Labs and King's College London, UK; and Meeyoung Cha of IBS and KAIST, South Korea > A new study led by researchers at Korea Advanced Institute of Science and Technology (KAIST) and Nokia Bell Labs in the United Kingdom investigated the cultural and individual factors that influence sleep. In contrast to previous studies that relied on surveys or controlled experiments at labs, the team used commercially available smartwatches for extensive data collection, analyzing 52 million logs collected over a four-year period from 30,082 individuals in 11 countries. These people wore Nokia smartwatches, which allowed the team to investigate country-specific sleep patterns based on the digital logs from the devices. < Figure comparing survey and smartwatch logs on average sleep-time, wake-time, and sleep durations. Digital logs consistently recorded delayed hours of wake- and sleep-time, resulting in shorter sleep durations. > Digital logs collected from the smartwatches revealed discrepancies in wake-up times and sleep-times, sometimes by tens of minutes to an hour, from the data previously collected from self-report assessments. 
The average sleep-time overall was calculated to be around midnight, and the average wake-up time was 7:42 AM. The team discovered, however, that individuals' sleep is heavily linked to their geographical location and cultural factors. While wake-up times were similar, sleep-time varied by country. Individuals in higher-GDP countries had more records of delayed bedtime. Those in collectivist cultures, compared to individualist cultures, also showed more records of delayed bedtime. Among the studied countries, Japan had the shortest total sleep duration, averaging under 7 hours, while Finland had the longest, averaging 8 hours. Researchers calculated essential sleep metrics used in clinical studies, such as sleep efficiency, sleep duration, and overslept hours on weekends, to analyze the extensive sleep patterns. Using Principal Component Analysis (PCA), they further condensed these metrics into two major sleep dimensions representing sleep quality and quantity. A cross-country comparison revealed that societal factors account for 55% of the variation in sleep quality and 63% of the variation in sleep quantity. Countries with a higher individualism index (IDV), which place greater emphasis on individual achievements and relationships, had significantly longer sleep durations, which could be attributed to such societies having a norm of going to bed early. Spain and Japan, on the other hand, had the latest bedtimes along with the highest collectivism scores (low IDV). The study also discovered a moderate relationship between a higher uncertainty avoidance index (UAI), which measures the implementation of general laws and regulations in the daily lives of regular citizens, and better sleep quality. Researchers also investigated how physical activity can affect sleep quantity and quality to see if individuals can counterbalance cultural influences through personal interventions. 
They discovered that increasing daily activity can improve sleep quality by shortening the time needed to fall asleep and to wake up. Individuals who exercised more, however, did not sleep longer. The effect of exercise differed by country, with more pronounced effects observed in some countries, such as the United States and Finland. Interestingly, in Japan, no obvious effect of exercise could be observed. These findings suggest that the relationship between daily activity and sleep may differ by country and that different exercise regimens may be more effective in different cultures. This research, published in the international journal Scientific Reports (Nature Portfolio), sheds light on the influence of social factors on sleep. (Paper Title "Social dimensions impact individual sleep quantity and quality" Article number: 9681) One of the co-authors, Daniele Quercia, commented: “Excessive work schedules, long working hours, and late bedtime in high-income countries and social engagement due to high collectivism may cause bedtimes to be delayed.” Commenting on the research, the first author Shaun Sungkyu Park said, "While it is intriguing to see with large-scale data that a society can play a role in determining the quantity and quality of an individual's sleep, the significance of this study is that it quantitatively shows that even within the same culture (country), individual efforts such as daily exercise can have a positive impact on sleep quantity and quality." "Sleep not only has a great impact on one’s well-being but it is also known to be associated with health issues such as obesity and dementia," said the lead author, Meeyoung Cha. "In order to ensure adequate sleep and improve sleep quality in an aging society, individual efforts and social support must work together," she said. 
The research team will contribute to the development of the high-tech sleep industry by releasing, free of charge, code that easily calculates the sleep indicators developed in this study, as well as by providing benchmark data for the various types of sleep research to follow.
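
The PCA step mentioned above, in which several sleep metrics are condensed into two dimensions, can be sketched roughly as follows. This is only an illustration with NumPy, not the code the team plans to release: the five rows of data, the column choice, and the function name `pca_two_components` are hypothetical.

```python
import numpy as np


def pca_two_components(X):
    """Standardize each metric, then project onto the first two
    principal components obtained from an SVD."""
    X = np.asarray(X, dtype=float)
    Z = (X - X.mean(axis=0)) / X.std(axis=0, ddof=1)
    _, singular_values, Vt = np.linalg.svd(Z, full_matrices=False)
    component_scores = Z @ Vt[:2].T
    explained = singular_values**2 / np.sum(singular_values**2)
    return component_scores, explained[:2]


# Hypothetical rows, one per person:
# [sleep efficiency (%), sleep duration (h), weekend oversleep (h)]
metrics = np.array([
    [92.0, 7.9, 0.4],
    [85.0, 6.8, 1.2],
    [88.0, 7.2, 0.9],
    [90.0, 7.6, 0.6],
    [83.0, 6.5, 1.5],
])

component_scores, explained = pca_two_components(metrics)
print(component_scores.shape)  # one row per person, two columns
print(explained)               # share of variance kept by each component
```

Whether the two resulting components can be read as "quality" and "quantity" depends on the real data and metrics; the toy values here make no such claim.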

-

KAIST Civil Engineering Students named Runner-up a..

A team of five students from the Korea Advanced Institute of Science and Technology (KAIST) was awarded second place in a premier urban design student competition hosted by the Urban Land Institute and Hines, the 2023 ULI Hines Student Competition - Asia Pacific. The competition, which was held for the first time in the Asia-Pacific region, is an internationally recognized event that typically attracts hundreds of applicants. Jonah Remigio, Sojung Noh, Estefania Rodriguez, Jihyun Kang, and Ayantu Teshome, who joined forces under the name of “Team Hashtag Development”, were supported by faculty advisors Dr. Albert Han and Dr. Youngchul Kim of the Department of Civil and Environmental Engineering to imagine a more sustainable and enriched way of living in the Jurong district of Singapore. Their submission, titled “Proposal: The Nest”, analyzed big data from Singapore to determine which real estate business strategies would best enhance the quality of living and the economy of the region. Their final design, "The Nest", utilized mixed-use zoning to integrate the site’s scenic waterfront with homes, medical innovation, and sustainable technology, altogether creating a place to innovate, inhabit, and immerse. < The Nest by Team Hashtag Development (Jonah Remigio, Ayantu Teshome Mossisa, Estefania Ayelen Rodriguez del Puerto, Sojung Noh, Jihyun Kang) ©2023 Urban Land Institute > Ultimately, the team was recognized for their hard work and determination, leaving South Korea’s mark on the arena of international scholastic achievement when they were named one of the finalists on April 13th. 
< Members of Team Hashtag Development > Team Hashtag Development gave a virtual presentation to a jury of six ULI members on April 20th, along with "Team The REAL" from the University of Economics Ho Chi Minh City, Vietnam, and "Team Omusubi" from Waseda University, Japan. Team Omusubi, which submitted the proposal "Jurong Urban Health Campus", was announced as the winner on the 31st of May, after the virtual briefing by the top three finalists.