Research News

College of Engineering News

-

Interactive Map of Metabolical Synthesis of Chemic..

An interactive map that compiles the chemicals produced by biological, chemical, and combined reactions has been distributed on the web - A team led by Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering organized and distributed an all-inclusive listing of chemical substances that can be synthesized using microorganisms - It is expected to be used by researchers around the world, as it enables easy assessment of synthetic pathways through the web.

A research team comprising Woo Dae Jang, Gi Bae Kim, and Distinguished Professor Sang Yup Lee of the Department of Chemical and Biomolecular Engineering at KAIST reported an interactive metabolic map of bio-based chemicals. Their research paper “An interactive metabolic map of bio-based chemicals” was published online in Trends in Biotechnology on August 10, 2022. As a response to rapid climate change and environmental pollution, research on producing petrochemical products using microorganisms is receiving attention as a sustainable alternative to existing production methods. In order to synthesize various chemical substances, materials, and fuels using microorganisms, it is necessary to first construct the biosynthetic pathway toward the desired product through exploration and discovery and then introduce it into microorganisms. In addition, in order to efficiently synthesize various chemical substances, it is sometimes necessary to employ chemical methods alongside bioengineering methods using microorganisms. For the production of non-native chemicals, novel pathways are designed by recruiting enzymes from heterologous sources or employing enzymes designed through rational engineering, directed evolution, or ab initio design.
The research team completed a map of chemicals compiling all available pathways of biological and/or chemical reactions that lead to the production of various bio-based chemicals in 2019 and published the map in Nature Catalysis. The map was distributed in the form of a poster to industry and academia so that the synthesis paths of bio-based chemicals could be checked at a glance. This time, the research team has expanded the bio-based chemicals map into an interactive map on the web so that anyone with internet access can quickly explore efficient paths to synthesize desired products. The web-based map provides visual tools for the interactive visualization, exploration, and analysis of complex networks of biological and/or chemical reactions toward the desired products. In addition, the reported paper also discusses the production of natural compounds that are used for diverse purposes such as food and medicine, which will help in designing novel pathways through similar approaches or by exploiting the promiscuity of enzymes described in the map. The published bio-based chemicals map is also available at http://systemsbiotech.co.kr. The co-first authors, Dr. Woo Dae Jang and Ph.D. student Gi Bae Kim, said, “We conducted this study to address the demand for updating the previously distributed chemicals map and enhancing its versatility. The map is expected to be utilized in a variety of research and in efforts to set strategies and prospects for chemical production incorporating bio and chemical methods that are detailed in the map.” Distinguished Professor Sang Yup Lee said, “The interactive bio-based chemicals map is expected to help the design and optimization of the metabolic pathways for the biosynthesis of target chemicals together with the strategies of chemical conversions, serving as a blueprint for developing further ideas on the production of desired chemicals through biological and/or chemical reactions.”

< Image: The interactive metabolic map of bio-based chemicals. >

-

PICASSO Technique Drives Biological Molecules into..

The new imaging approach brings current imaging colors from four to more than 15 for mapping overlapping proteins

Pablo Picasso’s surreal cubist artistic style shifted common features into unrecognizable scenes, but a new imaging approach bearing his name may elucidate the most complicated subject: the brain. By employing artificial intelligence to clarify the spectral color blending of the tiny molecules used to stain specific proteins and other items of research interest, the PICASSO technique allows researchers to use more than 15 colors to image and parse out overlapping proteins. The PICASSO developers, based in Korea, published their approach on May 5 in Nature Communications.

< 45-color multiplexed imaging of the mouse hippocampus via PICASSO in three staining and imaging rounds (Nature Communications/KAIST) >

Fluorophores, the staining molecules, emit specific colors when excited by light, but if more than four fluorophores are used, their emitted colors overlap and blend. Researchers previously developed techniques to correct this spectral overlap by precisely defining the matrix of mixed and unmixed images. This measurement depends on reference spectra, found by identifying clear images of only one fluorophore-stained specimen or of multiple, identically prepared specimens that only contain a single fluorophore each. “Such reference spectra measurement could be complicated to perform in highly heterogeneous specimens, such as the brain, due to the highly varied emission spectra of fluorophores depending on the subregions from which the spectra were measured,” said co-corresponding author Young-Gyu Yoon, professor in the School of Electrical Engineering at KAIST. He explained that the subregions would each need their own spectra reference measurements, making for an inefficient, time-consuming process.
“To address this problem, we developed an approach that does not require reference spectra measurements.” The approach is the “Process of ultra-multiplexed Imaging of biomolecules viA the unmixing of the Signals of Spectrally Overlapping fluorophores,” also known as PICASSO. Ultra-multiplexed imaging refers to visualizing the numerous individual components of a unit. Like a cinema multiplex in which each theater plays a different movie, each protein in a cell has a different role. By staining with fluorophores, researchers can begin to understand those roles. “We devised a strategy based on information theory; unmixing is performed by iteratively minimizing the mutual information between mixed images,” said co-corresponding author Jae-Byum Chang, professor in the Department of Materials Science and Engineering, KAIST. “This allows us to get away with the assumption that the spatial distribution of different proteins is mutually exclusive and enables accurate information unmixing.” To demonstrate PICASSO’s capabilities, the researchers applied the technique to imaging a mouse brain. With a single round of staining, they performed 15-color multiplexed imaging of a mouse brain. Although small, mouse brains are still complex, multifaceted organs that can take significant resources to map. According to the researchers, PICASSO can improve the capabilities of other imaging techniques and allow for the use of even more fluorophore colors. Using one such imaging technique in combination with PICASSO, the team achieved 45-color multiplexed imaging of the mouse brain in only three staining and imaging cycles, according to Yoon. “PICASSO is a versatile tool for the multiplexed biomolecule imaging of cultured cells, tissue slices and clinical specimens,” Chang said. “We anticipate that PICASSO will be useful for a broad range of applications for which biomolecules’ spatial information is important. 
One such application the tool would be useful for is revealing the cellular heterogeneities of tumor microenvironments, especially the heterogeneous populations of immune cells, which are closely related to cancer prognoses and the efficacy of cancer therapies.” The Samsung Research Funding & Incubation Center for Future Technology supported this work. Spectral imaging was performed at the Korea Basic Science Institute Western Seoul Center. -Publication Junyoung Seo, Yeonbo Sim, Jeewon Kim, Hyunwoo Kim, In Cho, Hoyeon Nam, Young-Gyu Yoon, Jae-Byum Chang, “PICASSO allows ultra-multiplexed fluorescence imaging of spatially overlapping proteins without reference spectra measurements,” May 5, Nature Communications (doi.org/10.1038/s41467-022-30168-z) -Profile Professor Jae-Byum Chang Department of Materials Science and Engineering College of Engineering KAIST Professor Young-Gyu Yoon School of Electrical Engineering College of Engineering KAIST
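The idea of unmixing by minimizing mutual information can be illustrated with a minimal two-channel sketch. This is not the PICASSO implementation: the synthetic pixel values, the single leakage coefficient of 0.4, the histogram-based estimator, and the function names `mutual_information` and `unmix_two_channels` are all assumptions made for illustration, and a coarse grid search stands in for the iterative minimization described in the article.

```python
import math
import random

def mutual_information(x, y, bins=16):
    """Histogram estimate of the mutual information (in nats) of two equal-length lists."""
    def to_bins(values):
        lo, hi = min(values), max(values)
        width = (hi - lo) / bins or 1.0
        return [min(int((v - lo) / width), bins - 1) for v in values]

    bx, by = to_bins(x), to_bins(y)
    n = len(x)
    joint, px, py = {}, [0] * bins, [0] * bins
    for i, j in zip(bx, by):
        joint[(i, j)] = joint.get((i, j), 0) + 1
        px[i] += 1
        py[j] += 1
    return sum(c / n * math.log(c * n / (px[i] * py[j]))
               for (i, j), c in joint.items())

def unmix_two_channels(mixed1, mixed2, alphas):
    """Pick the scaling factor alpha for which (mixed1 - alpha * mixed2)
    shares the least information with mixed2."""
    def score(alpha):
        candidate = [m1 - alpha * m2 for m1, m2 in zip(mixed1, mixed2)]
        return mutual_information(candidate, mixed2)
    return min(alphas, key=score)

# Synthetic "images": two independent signals, with the second one
# leaking into the first channel (hypothetical leakage of 0.4).
rng = random.Random(0)
signal1 = [rng.random() for _ in range(20000)]
signal2 = [rng.random() for _ in range(20000)]
mixed1 = [a + 0.4 * b for a, b in zip(signal1, signal2)]
mixed2 = signal2

alphas = [i / 5 for i in range(5)]  # candidate values 0.0, 0.2, ..., 0.8
estimated_alpha = unmix_two_channels(mixed1, mixed2, alphas)
print("estimated leakage:", estimated_alpha)
```

No reference spectrum is supplied anywhere in this sketch; the leakage is inferred only from the statistical dependence between the two channels, which is the property the article attributes to the approach.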

-

Now You Can See Floral Scents!

Optical interferometry visualizes how often lilies emit volatile organic compounds

< Image: Direct measurement of floral scents from a live lily using a laser interferometry method. The signal of the refractive index difference was monitored, and the VOC emission frequency was calculated using a fast Fourier transform. It showed the unsteady VOC emissions. >

Have you ever thought about when flowers emit their scents? KAIST mechanical engineers and biological scientists directly visualized how often a lily releases a floral scent using a laser interferometry method. These measurement results can provide new insights for understanding and further exploring the biosynthesis and emission mechanisms of floral volatiles. Why is it important to know this? It is well known that the fragrance of flowers affects their interactions with pollinators, microorganisms, and florivores. For instance, many flowering plants can tune their scent emission rates to the times when pollinators are active. Petunias and the wild tobacco Nicotiana attenuata emit floral scents at night to attract night-active pollinators. Thus, visualizing scent emissions can help us understand the ecological evolution of plant-pollinator interactions. Many groups have been trying to develop methods for scent analysis. Mass spectrometry has been one widely used method for investigating the fragrance of flowers. Although mass spectrometry reveals the quality and quantity of floral scents, it cannot directly measure how often the scents are released. A laser-based gas detection system and a smartphone-based detection system using chemo-responsive dyes have also been used to measure volatile organic compounds (VOCs) in real time, but it is still hard to measure the time-dependent emission rate of floral scents.
However, the KAIST research team co-led by Professor Hyoungsoo Kim from the Department of Mechanical Engineering and Professor Sang-Gyu Kim from the Department of Biological Sciences measured the refractive index difference between the vapor of the VOCs of lilies and the air to determine the emission frequency. The floral scent vapor could be detected because the refractive index of air is 1.0, while that of linalool, the major floral scent of the lily, is 1.46. Professor Hyoungsoo Kim said, “We expect this technology to be further applicable to various industrial sectors such as developing it to detect hazardous substances in a space.” The research team also plans to identify the DNA mechanism that controls floral scent secretion. The current work entitled “Real-time visualization of scent accumulation reveals the frequency of floral scent emissions” was published in Frontiers in Plant Science on April 18, 2022 (https://doi.org/10.3389/fpls.2022.835305). This research was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF-2021R1A2C2007835), the Rural Development Administration (PJ016403), and the KAIST-funded Global Singularity Research PREP-Program. -Publication: H. Kim, G. Lee, J. Song, and S.-G. Kim, "Real-time visualization of scent accumulation reveals the frequency of floral scent emissions," Frontiers in Plant Science 18, 835305 (2022) (https://doi.org/10.3389/fpls.2022.835305) -Profile: Professor Hyoungsoo Kim http://fil.kaist.ac.kr @MadeInH on Twitter Department of Mechanical Engineering KAIST Professor Sang-Gyu Kim https://sites.google.com/view/kimlab/home Department of Biological Sciences KAIST
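As a rough illustration of how an emission frequency can be read from a monitored refractive-index signal, the sketch below applies a discrete Fourier transform to a synthetic trace. The sampling rate, the 0.125 Hz burst frequency, the signal and noise amplitudes, and the function name `dominant_frequency` are made-up values for illustration, not measurements from the study, and a plain DFT stands in for the fast Fourier transform used by the team.

```python
import cmath
import math
import random

def dominant_frequency(signal, sample_rate_hz):
    """Return the frequency (Hz) of the strongest non-DC component of a real signal."""
    n = len(signal)
    mean = sum(signal) / n
    centered = [s - mean for s in signal]  # remove the constant offset
    best_k, best_magnitude = 1, 0.0
    for k in range(1, n // 2):  # positive frequencies only
        coeff = sum(x * cmath.exp(-2j * math.pi * k * t / n)
                    for t, x in enumerate(centered))
        if abs(coeff) > best_magnitude:
            best_k, best_magnitude = k, abs(coeff)
    return best_k * sample_rate_hz / n

# Synthetic refractive-index difference: periodic scent bursts plus noise.
rng = random.Random(1)
sample_rate_hz = 1.0        # hypothetical: one interferometry reading per second
burst_frequency_hz = 0.125  # hypothetical: one burst every 8 seconds
trace = [1e-4 * (1 + math.sin(2 * math.pi * burst_frequency_hz * t / sample_rate_hz))
         + rng.gauss(0, 1e-5)
         for t in range(256)]

print("dominant emission frequency (Hz):", dominant_frequency(trace, sample_rate_hz))
```

The peak of the spectrum gives the repetition rate of the bursts, which is the kind of quantity a concentration snapshot from mass spectrometry does not provide.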

-

A New Strategy for Active Metasurface Design Provi..

The new strategy displays an unprecedented upper limit of dynamic phase modulation with no significant variations in optical amplitude

An international team of researchers led by Professor Min Seok Jang of KAIST and Professor Victor W. Brar of the University of Wisconsin-Madison has demonstrated a widely applicable methodology enabling a full 360° active phase modulation for metasurfaces while maintaining significant levels of uniform light amplitude. This strategy can be fundamentally applied to any spectral region with any structures and resonances that fit the bill. Metasurfaces are optical components with specialized functionalities indispensable for real-life applications ranging from LIDAR and spectroscopy to futuristic technologies such as invisibility cloaks and holograms. They are known for their compact and micro/nano-sized nature, which enables them to be integrated into electronic computerized systems with sizes that are ever decreasing as predicted by Moore’s law.

< Figure 1. The metasurface designed by the team that demonstrates complete 2π tunable phase modulation utilizing the avoided crossing of two resonances. >

< Figure 2. a: Complex reflection coefficient trajectories with different mobility values for the graphene sheet case. Full 2π phase modulation does not occur without the avoided crossing with graphene plasmons, despite the increasing mobilities and therefore the decreasing linewidths. b: Complex reflection coefficient trajectories with different mobility values for the graphene ribbon case. >

In order to allow for such innovations, metasurfaces must be capable of manipulating the impinging light, doing so by manipulating either the light’s amplitude or phase (or both) and emitting it back out. However, dynamically modulating the phase over the full circle range has been a notoriously difficult task, with very few works managing to do so by sacrificing a substantial amount of amplitude control.
Challenged by these limitations, the team proposed a general methodology that enables metasurfaces to implement a dynamic phase modulation with the complete 360° phase range, all the while uniformly maintaining significant levels of amplitude. The underlying reason for the difficulty achieving such a feat is that there is a fundamental trade-off regarding dynamically controlling the optical phase of light. Metasurfaces generally perform such a function through optical resonances, an excitation of electrons inside the metasurface structure that harmonically oscillate together with the incident light. In order to be able to modulate through the entire range of 0-360°, the optical resonance frequency (the center of the spectrum) must be tuned by a large amount while the linewidth (the width of the spectrum) is kept to a minimum. However, to electrically tune the optical resonance frequency of the metasurface on demand, there needs to be a controllable influx and outflux of electrons into the metasurface and this inevitably leads to a larger linewidth of the aforementioned optical resonance. The problem is further compounded by the fact that the phase and the amplitude of optical resonances are closely correlated in a complex, non-linear fashion, making it very difficult to hold substantial control over the amplitude while changing the phase. The team’s work circumvented both problems by using two optical resonances, each with specifically designated properties. One resonance provides the decoupling between the phase and amplitude so that the phase is able to be tuned while significant and uniform levels of amplitude are maintained, as well as providing a narrow linewidth. The other resonance provides the capability of being sufficiently tuned to a large degree so that the complete full circle range of phase modulation is achievable. 
The quintessence of the work is then to combine the different properties of the two resonances through a phenomenon called avoided crossing, so that the interactions between the two resonances lead to an amalgamation of the desired traits that achieves and even surpasses the full 360° phase modulation with uniform amplitude. Professor Jang said, “Our research proposes a new methodology in dynamic phase modulation that breaks through the conventional limits and trade-offs, while being broadly applicable in diverse types of metasurfaces. We hope that this idea helps researchers implement and realize many key applications of metasurfaces, such as LIDAR and holograms, so that the nanophotonics industry keeps growing and provides a brighter technological future.” The research paper authored by Ju Young Kim and Juho Park, et al., and titled "Full 2π Tunable Phase Modulation Using Avoided Crossing of Resonances" was published in Nature Communications on April 19. The research was funded by the Samsung Research Funding & Incubation Center of Samsung Electronics. -Publication: Ju Young Kim, Juho Park, Gregory R. Holdman, Jacob T. Heiden, Shinho Kim, Victor W. Brar, and Min Seok Jang, “Full 2π Tunable Phase Modulation Using Avoided Crossing of Resonances” Nature Communications on April 19 (2022). doi.org/10.1038/s41467-022-29721-7 -Profile Professor Min Seok Jang School of Electrical Engineering KAIST
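The trade-off between tuning range and linewidth described in this article can be made concrete with a textbook single-resonance model. The sketch below uses the standard temporal coupled-mode expression for the reflection coefficient of a one-port resonator; it is not the team's two-resonance design, and the decay rates, tuning ranges, and function names `reflection` and `phase_span_deg` are assumptions chosen for illustration.

```python
import cmath
import math

def reflection(detuning, gamma_rad, gamma_abs=0.0):
    """Reflection coefficient of a one-port resonator (temporal coupled-mode theory).

    detuning  : light frequency minus resonance frequency
    gamma_rad : radiative decay rate
    gamma_abs : absorptive decay rate (linewidth is gamma_rad + gamma_abs)
    """
    return -1 + 2 * gamma_rad / (1j * detuning + gamma_rad + gamma_abs)

def phase_span_deg(tuning_range, gamma_rad, gamma_abs=0.0, samples=2001):
    """Phase range (degrees) covered when the resonance is tuned across
    `tuning_range`, centered on the fixed operating frequency."""
    unwrapped = []
    previous_raw = None
    for i in range(samples):
        detuning = -tuning_range / 2 + tuning_range * i / (samples - 1)
        raw = cmath.phase(reflection(detuning, gamma_rad, gamma_abs))
        if previous_raw is None:
            unwrapped.append(raw)
        else:
            step = raw - previous_raw
            step -= 2 * math.pi * round(step / (2 * math.pi))  # undo 2*pi jumps
            unwrapped.append(unwrapped[-1] + step)
        previous_raw = raw
    return math.degrees(max(unwrapped) - min(unwrapped))

# Same tuning range, increasingly broad resonance (arbitrary frequency units).
for gamma in (0.1, 1.0, 5.0):
    print(f"linewidth {gamma}: phase span {phase_span_deg(2.0, gamma):.1f} degrees")

# Absorption loss pulls the on-resonance amplitude below 1.
print("on-resonance amplitude with loss:", abs(reflection(0.0, 1.0, 0.5)))
```

In this simplified model the phase span approaches 360° only when the tuning range is much larger than the linewidth, and any absorption makes the amplitude dip on resonance, which matches the two difficulties the article describes for a single resonance.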

-

LightPC Presents a Resilient System Using Only Non..

Lightweight Persistence Centric System (LightPC) ensures both data and execution persistence for energy-efficient full system persistence

A KAIST research team has developed hardware and software technology that ensures both data and execution persistence. The Lightweight Persistence Centric System (LightPC) makes systems resilient against power failures by using only non-volatile memory as the main memory. “We mounted non-volatile memory on a system board prototype and created an operating system to verify the effectiveness of LightPC,” said Professor Myoungsoo Jung. The team validated LightPC by powering the system down and up in the middle of execution, and it showed up to eight times more memory, 4.3 times faster application execution, and 73% lower power consumption compared to traditional systems. Professor Jung said that LightPC can be utilized in a variety of fields such as data centers and high-performance computing to provide large-capacity memory, high performance, low power consumption, and service reliability. In general, power failures on legacy systems can lead to the loss of data stored in the DRAM-based main memory. Unlike volatile memory such as DRAM, non-volatile memory can retain its data without power. Although non-volatile memory consumes less power and offers larger capacity than DRAM, it is typically used as secondary storage because of its lower write performance. For this reason, non-volatile memory is often used together with DRAM. However, modern systems employing non-volatile memory-based main memory experience unexpected performance degradation due to the complicated memory microarchitecture. To enable both data and execution persistence in legacy systems, it is necessary to transfer the data from the volatile memory to the non-volatile memory. Checkpointing is one possible solution. It periodically transfers the data in preparation for a sudden power failure.
While this technology is essential for ensuring high mobility and reliability for users, checkpointing also has serious drawbacks. It takes additional time and power to move data and requires a data recovery process as well as restarting the system. In order to address these issues, the research team developed a processor and memory controller to raise the performance of main memory built only from non-volatile memory. LightPC matches the performance of DRAM by minimizing the internal volatile memory components of the non-volatile memory, exposing the non-volatile memory (PRAM) media to the host, and increasing parallelism to service on-the-fly requests as soon as possible. The team also presented operating system technology that quickly makes the execution states of running processes persistent without the need for a checkpointing process. The operating system prevents all modifications to execution states and data by keeping all program executions idle before transferring data in order to support consistency within a period much shorter than the standard power hold-up time of about 16 milliseconds. For consistency, when the power is recovered, the computer almost immediately revives itself and re-executes all the offline processes without the need for a boot process. The researchers will present their work (LightPC: Hardware and Software Co-Design for Energy-Efficient Full System Persistence) at the International Symposium on Computer Architecture (ISCA) 2022 in New York in June. More information is available at the CAMELab website (http://camelab.org). < Figure 1. Customized system prototype (left: System prototype, Right: Implementation view) > < Figure 2. Overview of the proposed LightPC > < Figure 3. Energy and performance of the LightPC/LegacyPC (Right: Cycle norm. to legacy PC) > -Profile: Professor Myoungsoo Jung Computer Architecture and Memory Systems Laboratory (CAMEL) http://camelab.org School of Electrical Engineering KAIST

-

Energy-Efficient AI Hardware Technology Via a Brai..

Researchers demonstrate neuromodulation-inspired stashing system for the energy-efficient learning of a spiking neural network using a self-rectifying memristor array

< Image: A schematic illustrating the localized brain activity (a-c) and the configuration of the hardware and software hybrid neural network (d-e) using a self-rectifying memristor array (f-g). >

Researchers have proposed a novel system inspired by the neuromodulation of the brain, referred to as a ‘stashing system,’ that requires less energy consumption. The research group led by Professor Kyung Min Kim from the Department of Materials Science and Engineering has developed a technology that can efficiently handle mathematical operations for artificial intelligence by imitating the continuous changes in the topology of the neural network according to the situation. The human brain changes its neural topology in real time, learning to store or recall memories as needed. The research group presented a new artificial intelligence learning method that directly implements these neural coordination circuit configurations. Research on artificial intelligence is becoming very active, and the development of artificial intelligence-based electronic devices and product releases are accelerating, especially in the Fourth Industrial Revolution age. To implement artificial intelligence in electronic devices, customized hardware development should also be supported. However, most electronic devices for artificial intelligence require high power consumption and highly integrated memory arrays for large-scale tasks. It has been challenging to solve these power consumption and integration limitations, and efforts have been made to find out how the human brain solves problems.
To prove the efficiency of the developed technology, the research group created artificial neural network hardware equipped with a self-rectifying synaptic array and an algorithm called a ‘stashing system’ that was developed to conduct artificial intelligence learning. As a result, it was able to reduce energy consumption by 37% within the stashing system without any accuracy degradation. This result proves that emulating the neuromodulation in humans is possible. Professor Kim said, “In this study, we implemented the learning method of the human brain with only a simple circuit composition and through this we were able to reduce the energy needed by nearly 40 percent.” This neuromodulation-inspired stashing system that mimics the brain’s neural activity is compatible with existing electronic devices and commercialized semiconductor hardware. It is expected to be used in the design of next-generation semiconductor chips for artificial intelligence. This study was published in Advanced Functional Materials in March 2022 and supported by KAIST, the National Research Foundation of Korea, the National NanoFab Center, and SK Hynix. -Publication: Woon Hyung Cheong, Jae Bum Jeon, Jae Hyun In, Geunyoung Kim, Hanchan Song, Janho An, Juseong Park, Young Seok Kim, Cheol Seong Hwang, and Kyung Min Kim (2022) “Demonstration of Neuromodulation-inspired Stashing System for Energy-efficient Learning of Spiking Neural Network using a Self-Rectifying Memristor Array,” Advanced Functional Materials March 31, 2022 (DOI: 10.1002/adfm.202200337) -Profile: Professor Kyung Min Kim http://semi.kaist.ac.kr https://scholar.google.com/citations?user=BGw8yDYAAAAJ&hl=ko Department of Materials Science and Engineering KAIST

-

Machine Learning-Based Algorithm to Speed up DNA S..

The algorithm presents the first full-fledged, short-read alignment software that leverages learned indices for solving the exact match search problem for efficient seeding

< Image: Scientists from KAIST develop a new machine-learning-based approach to speed up DNA sequencing. >

The human genome consists of a complete set of DNA, which is about 6.4 billion letters long. Because of its size, reading the whole genome sequence at once is challenging. So scientists use DNA sequencers to produce hundreds of millions of DNA sequence fragments, or short reads, up to 300 letters long. The short reads are then assembled like a giant jigsaw puzzle to reconstruct the entire genome sequence. Even with very fast computers, this job can take hours to complete. A research team at KAIST has achieved up to 3.45x faster speeds by developing the first short-read alignment software that uses a recent advance in machine learning called a learned index. The research team reported their findings on March 7, 2022 in the journal Bioinformatics. The software has been released as open source and can be found on GitHub (https://github.com/kaist-ina/BWA-MEME). Next-generation sequencing (NGS) is a state-of-the-art DNA sequencing method. Projects are underway with the goal of producing genome sequencing at population scale. Modern NGS hardware is capable of generating billions of short reads in a single run. The short reads then have to be aligned with the reference DNA sequence. With large-scale DNA sequencing operations running hundreds of next-generation sequencers, the need for an efficient short-read alignment tool has become even more critical. Accelerating DNA sequence alignment would be a step toward achieving the goal of population-scale sequencing. However, existing algorithms are limited in their performance because of their frequent memory accesses. BWA-MEM2 is a popular short-read alignment software package currently used to sequence DNA.
However, it has its limitations. The state-of-the-art alignment has two phases – seeding and extending. During the seeding phase, searches find exact matches of short reads in the reference DNA sequence. During the extending phase, the short reads from the seeding phase are extended. In the current process, bottlenecks occur in the seeding phase. Finding the exact matches slows the process. The researchers set out to solve the problem of accelerating the DNA sequence alignment. To speed the process, they applied machine learning techniques to create an algorithmic improvement. Their algorithm, BWA-MEME (BWA-MEM emulated) leverages learned indices to solve the exact match search problem. The original software compared one character at a time for an exact match search. The team’s new algorithm achieves up to 3.45x faster speeds in seeding throughput over BWA-MEM2 by reducing the number of instructions by 4.60x and memory accesses by 8.77x. “Through this study, it has been shown that full genome big data analysis can be performed faster and less costly than conventional methods by applying machine learning technology,” said Professor Dongsu Han from the School of Electrical Engineering at KAIST. The researchers’ ultimate goal was to develop efficient software that scientists from academia and industry could use on a daily basis for analyzing big data in genomics. “With the recent advances in artificial intelligence and machine learning, we see so many opportunities for designing better software for genomic data analysis. The potential is there for accelerating existing analysis as well as enabling new types of analysis, and our goal is to develop such software,” added Han. Whole genome sequencing has traditionally been used for discovering genomic mutations and identifying the root causes of diseases, which leads to the discovery and development of new drugs and cures. There could be many potential applications. 
Whole genome sequencing is used not only for research, but also for clinical purposes. “The science and technology for analyzing genomic data is making rapid progress to make it more accessible for scientists and patients. This will enhance our understanding about diseases and develop a better cure for patients of various diseases.” The research was funded by the National Research Foundation of the Korean government’s Ministry of Science and ICT. -Publication Youngmok Jung, Dongsu Han, “BWA-MEME:BWA-MEM emulated with a machine learning approach,” Bioinformatics, Volume 38, Issue 9, May 2022 (https://doi.org/10.1093/bioinformatics/btac137) -Profile Professor Dongsu Han School of Electrical Engineering KAIST
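The general idea of a learned index for exact match search can be sketched as follows: a model predicts where a key sits in a sorted array, and a short search bounded by the model's worst-case error finds the exact position. This is a generic illustration, not the BWA-MEME code: the single linear model, the toy reference string, the 4-letter seeds, and the names `encode` and `LearnedIndex` are assumptions, and the published index is considerably more elaborate.

```python
import bisect

BASE_CODE = {"A": 0, "C": 1, "G": 2, "T": 3}

def encode(kmer):
    """Encode a DNA string as a base-4 integer so that keys can be sorted."""
    value = 0
    for letter in kmer:
        value = value * 4 + BASE_CODE[letter]
    return value

class LearnedIndex:
    """Sorted keys plus a linear model that predicts each key's position."""

    def __init__(self, keys):
        self.keys = sorted(set(keys))
        n = len(self.keys)
        mean_key = sum(self.keys) / n
        mean_pos = (n - 1) / 2
        variance = sum((k - mean_key) ** 2 for k in self.keys)
        covariance = sum((k - mean_key) * (p - mean_pos)
                         for p, k in enumerate(self.keys))
        # Least-squares fit of position as a function of key.
        self.slope = covariance / variance if variance else 0.0
        self.intercept = mean_pos - self.slope * mean_key
        # Worst-case prediction error bounds the later search window.
        self.max_error = max(abs(p - self._predict(k))
                             for p, k in enumerate(self.keys))

    def _predict(self, key):
        return int(round(self.slope * key + self.intercept))

    def find(self, key):
        """Return the position of `key`, or None if it is absent."""
        guess = self._predict(key)
        lo = min(max(0, guess - self.max_error), len(self.keys))
        hi = max(lo, min(len(self.keys), guess + self.max_error + 1))
        i = bisect.bisect_left(self.keys, key, lo, hi)
        if i < len(self.keys) and self.keys[i] == key:
            return i
        return None

# Hypothetical toy reference and seed length.
reference = "ACGTACGGTCAGTTACGGA"
k = 4
index = LearnedIndex(encode(reference[i:i + k])
                     for i in range(len(reference) - k + 1))

print("GGTC found at sorted position:", index.find(encode("GGTC")))
print("TTTT found at sorted position:", index.find(encode("TTTT")))
```

Because the model narrows the search to a small window, far fewer comparisons and memory accesses are needed than in a search over the whole array, which is the kind of saving the article reports for the seeding phase.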

-

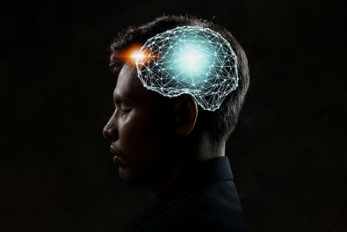

Decoding Brain Signals to Control a Robotic Arm

Advanced brain-machine interface system successfully interprets arm movement directions from neural signals in the brain

< Figure: Experimental paradigm. Subjects were instructed to perform reach-and-grasp movements to designate the locations of the target in three-dimensional space. (a) Subjects A and B were provided the visual cue as a real tennis ball at one of four pseudo-randomized locations. (b) Subjects A and B were provided the visual cue as a virtual reality clip showing a sequence of five stages of a reach-and-grasp movement. >

Researchers have developed a mind-reading system for decoding neural signals from the brain during arm movement. The method, described in the journal Applied Soft Computing, can be used by a person to control a robotic arm through a brain-machine interface (BMI). A BMI is a device that translates nerve signals into commands to control a machine, such as a computer or a robotic limb. There are two main techniques for monitoring neural signals in BMIs: electroencephalography (EEG) and electrocorticography (ECoG). EEG records signals from electrodes on the surface of the scalp and is widely employed because it is non-invasive, relatively cheap, safe, and easy to use. However, EEG has low spatial resolution and detects irrelevant neural signals, which makes it difficult to interpret the intentions of individuals from the recordings. ECoG, on the other hand, is an invasive method that involves placing electrodes directly on the surface of the cerebral cortex below the scalp. Compared with EEG, ECoG can monitor neural signals with much higher spatial resolution and less background noise. However, this technique has several drawbacks.
“The ECoG is primarily used to find potential sources of epileptic seizures, meaning the electrodes are placed in different locations for different patients and may not be in the optimal regions of the brain for detecting sensory and movement signals,” explained Professor Jaeseung Jeong, a brain scientist at KAIST. “This inconsistency makes it difficult to decode brain signals to predict movements.” To overcome these problems, Professor Jeong’s team developed a new method for decoding ECoG neural signals during arm movement. The system is based on a machine-learning system for analysing and predicting neural signals called an ‘echo-state network’ and a mathematical probability model called the Gaussian distribution. In the study, the researchers recorded ECoG signals from four individuals with epilepsy while they were performing a reach-and-grasp task. Because the ECoG electrodes were placed according to the potential sources of each patient’s epileptic seizures, only 22% to 44% of the electrodes were located in the regions of the brain responsible for controlling movement. During the movement task, the participants were given visual cues, either by placing a real tennis ball in front of them, or via a virtual reality headset showing a clip of a human arm reaching forward in first-person view. They were asked to reach forward, grasp an object, then return their hand and release the object, while wearing motion sensors on their wrists and fingers. In a second task, they were instructed to imagine reaching forward without moving their arms. The researchers monitored the signals from the ECoG electrodes during real and imaginary arm movements, and tested whether the new system could predict the direction of this movement from the neural signals. They found that the novel decoder successfully classified arm movements in 24 directions in three-dimensional space, both in the real and virtual tasks, and that the results were at least five times more accurate than chance. 
They also used a computer simulation to show that the novel ECoG decoder could control the movements of a robotic arm. Overall, the results suggest that the new machine learning-based BCI system successfully used ECoG signals to interpret the direction of the intended movements. The next steps will be to improve the accuracy and efficiency of the decoder. In the future, it could be used in a real-time BMI device to help people with movement or sensory impairments. This research was supported by the KAIST Global Singularity Research Program of 2021, the Brain Research Program of the National Research Foundation of Korea funded by the Ministry of Science, ICT, and Future Planning, and the Basic Science Research Program through the National Research Foundation of Korea funded by the Ministry of Education. -Publication Hoon-Hee Kim, Jaeseung Jeong, “An electrocorticographic decoder for arm movement for brain-machine interface using an echo state network and Gaussian readout,” Applied Soft Computing, online December 31, 2021 (doi.org/10.1016/j.asoc.2021.108393) -Profile Professor Jaeseung Jeong Department of Bio and Brain Engineering College of Engineering KAIST

-

Improving speech intelligibility with Privacy-Pres..

A privacy-preserving AR system can augment the speaker's speech with real-life subtitles to overcome the loss of contextual cues caused by mask-wearing and social distancing during the COVID-19 pandemic. Degraded speech intelligibility induces participants in face-to-face conversations to speak louder and more distinctly, exposing the content to potential eavesdroppers. Face masks similarly degrade the wearer's speech intelligibility, especially during the post-COVID-19 crisis. Augmented Reality (AR) can serve as an effective tool to visualise people's conversations and promote speech intelligibility, an approach known as speech augmentation. However, visualised conversations without proper privacy management can expose AR users to privacy risks. An international research team led by Prof. Lik-Hang LEE in the Department of Industrial and Systems Engineering at KAIST and Prof. Pan HUI in Computational Media and Arts at Hong Kong University of Science and Technology employed a conversation-oriented Contextual Integrity (CI) principle to develop a privacy-preserving AR framework for speech augmentation. At its core, the framework, named Theophany, establishes ad-hoc social networks between relevant conversation participants to exchange contextual information and improve speech intelligibility in real time. < Figure 1: A real-life subtitle application with AR headsets > Theophany has been implemented as a real-life subtitle application in AR to improve speech intelligibility in daily conversations (Figure 1). This implementation leverages multi-modal channels such as eye-tracking, camera, and audio. Theophany transforms the user's speech into text and estimates the intended recipients through gaze detection. The CI Enforcer module evaluates the sentences' sensitivity. If the sensitivity meets the speaker's privacy threshold, the sentence is transmitted to the appropriate recipients (Figure 2).
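The routing step described above can be sketched roughly as follows. This is an illustrative sketch, not Theophany's actual implementation: the numeric sensitivity score, the per-recipient thresholds, and the names `Sentence` and `route_sentence` are all assumptions made for the example, and "meets the privacy threshold" is interpreted here as "the sensitivity does not exceed the limit the speaker has set for that recipient".

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Sentence:
    """A transcribed sentence with a hypothetical sensitivity score in [0, 1]."""
    text: str
    sensitivity: float  # assumption: higher means more sensitive


def route_sentence(
    sentence: Sentence,
    in_gaze: Iterable[str],
    thresholds: Dict[str, float],
) -> List[str]:
    """Return the people in the speaker's gaze who may receive the subtitle.

    thresholds maps a person to the highest sensitivity the speaker is willing
    to share with them (hypothetical); unknown people default to 0.0, so they
    only receive sentences that are not sensitive at all.
    """
    recipients = []
    for person in in_gaze:
        limit = thresholds.get(person, 0.0)
        if sentence.sensitivity <= limit:
            recipients.append(person)
    return recipients


if __name__ == "__main__":
    thresholds = {"coworker": 0.6, "friend": 0.3}
    gaze = ["coworker", "friend", "stranger"]
    for s in (
        Sentence("The weather is nice today.", 0.0),
        Sentence("The project deadline moved.", 0.5),
        Sentence("Here is my account password.", 0.9),
    ):
        print(s.text, "->", route_sentence(s, gaze, thresholds))
```

In this sketch a sentence that is not sensitive reaches everyone in the gaze, a moderately sensitive one reaches only the coworker, and a highly sensitive one reaches nobody.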
< Figure 2: Multi-modal Contextual Integrity Channel > Based on the principles of Contextual Integrity (CI), parameters of privacy perception, such as topic, location, and participants, are designed for privacy-preserving face-to-face conversations. Accordingly, Theophany's operation depends on the topic and session. Figure 3 demonstrates several illustrative conversation sessions: (a) the topic is not sensitive and is transmitted to everybody in the user's gaze; (b) the topic is work-sensitive and is only transmitted to the coworker; (c) the topic is sensitive and is only transmitted to the friend in the user's gaze; (d) a new friend entering the user's gaze only gets the textual transcription once a new session (topic) starts; (e) the topic is highly sensitive, and nobody gets the textual transcription. < Figure 3: Speech Augmentation in five illustrative sessions > Theophany within a prototypical AR system augments the speaker's speech with real-life subtitles to overcome the loss of contextual cues caused by mask-wearing and social distancing during the COVID-19 pandemic. The research was published in ACM Multimedia under the title 'Theophany: Multi-modal Speech Augmentation in Instantaneous Privacy Channels' (DOI: 10.1145/3474085.3475507) and was selected as one of the best paper award candidates (Top 5). Note that the first author is an alumnus of the Industrial and Systems Engineering Department at KAIST. Short Bio: Lik-Hang Lee received a PhD degree from SyMLab, Hong Kong University of Science and Technology, and Bachelor's and M.Phil. degrees from the University of Hong Kong. He is currently an assistant professor (tenure-track) with the Korea Advanced Institute of Science and Technology (KAIST), South Korea, and the head of the Augmented Reality and Media Laboratory, KAIST. He has designed and built various human-centric computing systems specializing in augmented and virtual realities (AR/VR).
In recent years, he has published more than 30 research papers on AR/VR at prestigious venues such as ACM WWW, ACM IMWUT, ACM Multimedia, ACM CSUR, IEEE Percom, and so on. He also serves the research community as a TPC member, PC member, and workshop organizer at prestigious venues such as AAAI, IJCAI, IEEE PERCOM, ACM CHI, ACM Multimedia, ACM IMWUT, IEEE VR, etc.

-

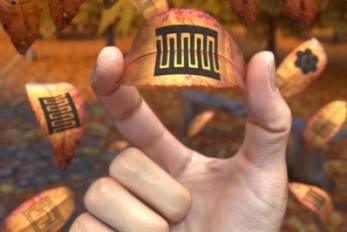

Eco-Friendly Micro-Supercapacitors Using Fallen Le..

Femtosecond micro-supercapacitors on a single leaf could easily be applied to wearable electronics, smart houses, and IoT devices < Image: The schematic illustration of the production of femtosecond laser-induced graphene. > A KAIST research team has developed a graphene-inorganic-hybrid micro-supercapacitor made from fallen leaves using femtosecond direct laser writing lithography. The advancement of wearable electronic devices is synonymous with innovations in flexible energy storage devices. Of the various energy storage devices, micro-supercapacitors have drawn a great deal of interest for their high electrical power density, long lifetimes, and short charging times. However, waste battery generation has increased with the growing consumption and use of electronic equipment and with the short replacement cycles that follow advancements in mobile devices. The safety and environmental issues involved in the collection, recycling, and processing of such waste batteries are creating a number of challenges. Forests cover about 30 percent of the Earth’s land surface, producing a huge amount of fallen leaves. This naturally occurring biomass comes in large quantities and is both biodegradable and reusable, which makes it an attractive, eco-friendly material. However, if the leaves are left neglected instead of being used efficiently, they can contribute to fires or water pollution. To solve both problems at once, a research team led by Professor Young-Jin Kim from the Department of Mechanical Engineering and Dr. Hana Yoon from the Korea Institute of Energy Research developed a one-step technology that creates porous 3D graphene micro-electrodes with high electrical conductivity by irradiating the surface of leaves with femtosecond laser pulses under atmospheric conditions, without additional treatment or materials. Taking this strategy further, the team also suggested a method for producing flexible micro-supercapacitors.
They showed that this technique could quickly and easily produce porous graphene-inorganic-hybrid electrodes at a low cost, and validated their performance by using the graphene micro-supercapacitors to power an LED and an electronic watch that could function as a thermometer, hygrometer, and timer. These results open up the possibility of the mass production of flexible and green graphene-based electronic devices. Professor Young-Jin Kim said, “Leaves create forest biomass that comes in unmanageable quantities, so using them for next-generation energy storage devices makes it possible for us to reuse waste resources, thereby establishing a virtuous cycle.” This research was published in Advanced Functional Materials last month and was sponsored by the Ministry of Agriculture, Food and Rural Affairs, the Korea Forest Service, and the Korea Institute of Energy Research. -Publication Truong-Son Dinh Le, Yeong A. Lee, Han Ku Nam, Kyu Yeon Jang, Dongwook Yang, Byunggi Kim, Kanghoon Yim, Seung Woo Kim, Hana Yoon, and Young-Jin Kim, “Green Flexible Graphene-Inorganic-Hybrid Micro-Supercapacitors Made of Fallen Leaves Enabled by Ultrafast Laser Pulses,” December 05, 2021, Advanced Functional Materials (doi.org/10.1002/adfm.202107768) -Profile Professor Young-Jin Kim Ultra-Precision Metrology and Manufacturing (UPM2) Laboratory Department of Mechanical Engineering KAIST

-

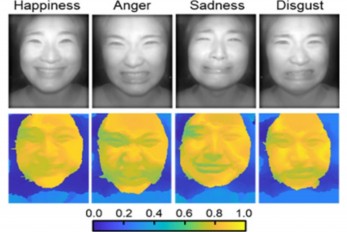

AI Light-Field Camera Reads 3D Facial Expressions

Machine-learned light-field camera reads facial expressions from high-contrast, illumination-invariant 3D facial images < Image: Facial expression reading based on MLP classification from 3D depth maps and 2D images obtained by NIR-LFC > A joint research team led by Professors Ki-Hun Jeong and Doheon Lee from the KAIST Department of Bio and Brain Engineering reported the development of a technique for facial expression detection by merging near-infrared light-field camera techniques with artificial intelligence (AI) technology. Unlike a conventional camera, the light-field camera contains micro-lens arrays in front of the image sensor, which makes the camera small enough to fit into a smartphone while allowing it to acquire the spatial and directional information of the light with a single shot. The technique has received attention as it can reconstruct images in a variety of ways including multi-views, refocusing, and 3D image acquisition, giving rise to many potential applications. However, shadows caused by external light sources in the environment and optical crosstalk between the micro-lenses have prevented existing light-field cameras from providing accurate image contrast and 3D reconstruction. The joint research team applied a vertical-cavity surface-emitting laser (VCSEL) in the near-IR range to stabilize the accuracy of 3D image reconstruction that previously depended on environmental light. When an external light source is shone on a face at 0-, 30-, and 60-degree angles, the light-field camera reduces image reconstruction errors by 54%. Additionally, by inserting a light-absorbing layer for visible and near-IR wavelengths between the micro-lens arrays, the team could minimize optical crosstalk while increasing the image contrast by 2.1 times.
Through this technique, the team could overcome the limitations of existing light-field cameras and was able to develop their NIR-based light-field camera (NIR-LFC), optimized for the 3D image reconstruction of facial expressions. Using the NIR-LFC, the team acquired high-quality 3D reconstruction images of facial expressions expressing various emotions regardless of the lighting conditions of the surrounding environment. The facial expressions in the acquired 3D images were distinguished through machine learning with an average of 85% accuracy, a statistically significant figure compared to when 2D images were used. Furthermore, by calculating the interdependency of distance information that varies with facial expression in 3D images, the team could identify the information a light-field camera utilizes to distinguish human expressions. Professor Ki-Hun Jeong said, “The sub-miniature light-field camera developed by the research team has the potential to become the new platform to quantitatively analyze the facial expressions and emotions of humans.” To highlight the significance of this research, he added, “It could be applied in various fields including mobile healthcare, field diagnosis, social cognition, and human-machine interactions.” This research was published in Advanced Intelligent Systems online on December 16, under the title, “Machine-Learned Light-field Camera that Reads Facial Expression from High-Contrast and Illumination Invariant 3D Facial Images.” This research was funded by the Ministry of Science and ICT and the Ministry of Trade, Industry and Energy. -Publication “Machine-learned light-field camera that reads facial expression from high-contrast and illumination invariant 3D facial images,” Sang-In Bae, Sangyeon Lee, Jae-Myeong Kwon, Hyun-Kyung Kim,
Kyung-Won Jang, Doheon Lee, Ki-Hun Jeong, Advanced Intelligent Systems, December 16, 2021 (doi.org/10.1002/aisy.202100182) -Profile Professor Ki-Hun Jeong Biophotonic Laboratory Department of Bio and Brain Engineering KAIST Professor Doheon Lee Department of Bio and Brain Engineering KAIST

-

Face Detection in Untrained Deep Neural Networks

A KAIST team shows that primitive visual selectivity for faces can arise spontaneously in completely untrained deep neural networks. Researchers have found that higher visual cognitive functions can arise spontaneously in untrained neural networks. A KAIST research team led by Professor Se-Bum Paik from the Department of Bio and Brain Engineering has shown that visual selectivity for facial images can arise even in completely untrained deep neural networks. This new finding provides revelatory insights into the mechanisms underlying the development of cognitive functions in both biological and artificial neural networks, and has a significant impact on our understanding of the origin of early brain functions before sensory experiences. The study, published in Nature Communications on December 16, demonstrates that neuronal activities selective to facial images are observed in randomly initialized deep neural networks in the complete absence of learning, and that they show the characteristics of those observed in biological brains. The ability to identify and recognize faces is a crucial function for social behavior, and this ability is thought to originate from neuronal tuning at the single or multi-neuronal level. Neurons that selectively respond to faces are observed in young animals of various species, and this has raised intense debate over whether face-selective neurons can arise innately in the brain or whether they require visual experience. Using a model neural network that captures properties of the ventral stream of the visual cortex, the research team found that face-selectivity can emerge spontaneously from random feedforward wirings in untrained deep neural networks. The team showed that the character of this innate face-selectivity is comparable to that observed with face-selective neurons in the brain, and that this spontaneous neuronal tuning for faces enables the network to perform face detection tasks.
These results imply a possible scenario in which the random feedforward connections that develop in early, untrained networks may be sufficient for initializing primitive visual cognitive functions. Professor Paik said, “Our findings suggest that innate cognitive functions can emerge spontaneously from the statistical complexity embedded in the hierarchical feedforward projection circuitry, even in the complete absence of learning.” He continued, “Our results provide a broad conceptual advance as well as advanced insight into the mechanisms underlying the development of innate functions in both biological and artificial neural networks, which may unravel the mystery of the generation and evolution of intelligence.” This work was supported by the National Research Foundation of Korea (NRF) and by the KAIST singularity research project. -Publication Seungdae Baek, Min Song, Jaeson Jang, Gwangsu Kim, and Se-Bum Paik, “Face detection in untrained deep neural networks,” Nature Communications 12, 7328, December 16, 2021 (https://doi.org/10.1038/s41467-021-27606-9) -Profile Professor Se-Bum Paik Visual System and Neural Network Laboratory Program of Brain and Cognitive Engineering Department of Bio and Brain Engineering College of Engineering KAIST